Google, TurboQuant, Stocks: The Trillion-Dollar Mix-Up!

Imagine waking up to find that a single research paper has wiped billions of dollars off the global stock market. That is exactly what happened in late March 2026. When Google Research published a seemingly innocent blog post detailing a new artificial intelligence algorithm, Wall Street hit the panic button. Within hours, semiconductor stocks sold off sharply, and global memory chip giants took the heaviest hit to their valuations.

But what caused this widespread panic? It comes down to Google’s TurboQuant algorithm, memory chip stocks, and a fundamental misunderstanding of how technology scales. Google’s new algorithm drastically reduces the memory needed to run advanced AI models. Investors immediately assumed that less memory per AI model meant fewer memory chips sold overall.

In this article, we are going to unpack this trillion-dollar misunderstanding. We will explore the technical brilliance behind Google’s AI memory compression, analyze why the market overreacted, and discuss what this means for the future of tech, especially for developers and tech enthusiasts right here in Pakistan.

What Exactly is Google’s TurboQuant?

To understand why the financial world lost its collective mind, we first need to understand the technology. In simple terms, TurboQuant is a revolutionary software breakthrough designed to solve one of the most expensive and frustrating problems in modern AI: memory consumption during the inference phase [1].

The KV Cache Bottleneck Explained

When you chat with a Large Language Model (LLM) like Gemini or ChatGPT, the AI needs to “remember” what was said earlier in the conversation to provide coherent responses. It stores this context in high-speed memory known as the Key-Value (KV) cache.

The problem? As your conversation gets longer, the KV cache balloons in size, because every new token adds its own keys and values to the cache. In fact, a modest AI assistant can easily see its KV cache swell past 7GB in just 30 turns of dialogue. This memory bottleneck means data centers have to purchase massive, expensive servers packed with High Bandwidth Memory (HBM) just to keep these AI models running without crashing.
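To see why the cache grows so quickly, here is a rough back-of-the-envelope calculation. It assumes a standard transformer that caches one key and one value vector per token, per layer, per attention head. The model shape used here (32 layers, 32 heads, 128 dimensions per head) is hypothetical and chosen only for illustration; it does not describe Gemini, ChatGPT, or any model named in this article.

```python
def kv_cache_bytes(num_tokens, num_layers, num_kv_heads, head_dim, bits_per_value=16):
    """Bytes needed to cache the keys and values for `num_tokens` tokens."""
    # The factor 2 covers one key vector plus one value vector.
    values_per_token = 2 * num_layers * num_kv_heads * head_dim
    return num_tokens * values_per_token * bits_per_value / 8


# Hypothetical model shape, for illustration only.
LAYERS, KV_HEADS, HEAD_DIM = 32, 32, 128

for tokens in (4_096, 16_384):
    gib = kv_cache_bytes(tokens, LAYERS, KV_HEADS, HEAD_DIM) / 2**30
    print(f"{tokens:>6} tokens -> {gib:.2f} GiB at 16 bits per value")
```

With this made-up shape, every token costs half a mebibyte of cache, so a conversation of about 16,000 tokens already needs 8 GiB. The growth is linear in conversation length, which is why long chats are so expensive to serve.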

Why Traditional Memory was Failing AI

Historically, data centers solved this by simply throwing more hardware at the problem. But physical memory is expensive, uses a lot of electricity, and generates massive amounts of heat. The AI industry was rapidly approaching a physical and financial wall. If AI inference costs didn’t come down, the dream of having an AI assistant in every device would remain financially impossible.

How PolarQuant and QJL Save the Day

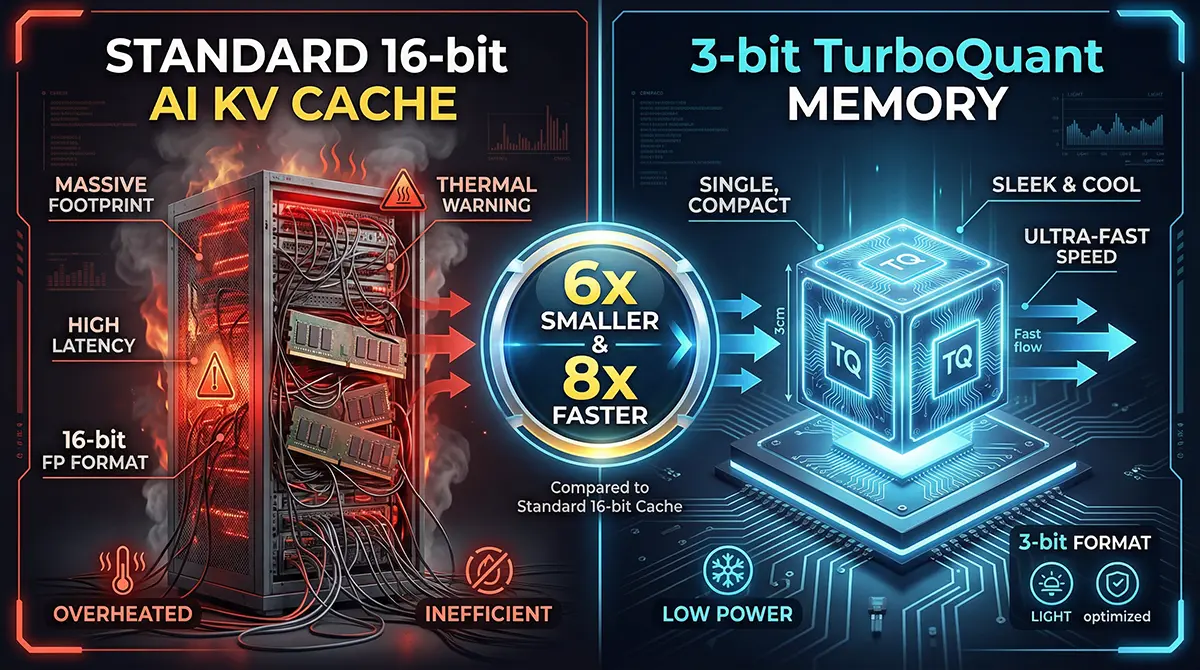

Enter TurboQuant. Google’s researchers figured out how to compress this massive KV cache down to just 3 bits per value (down from the standard 16 bits), shrinking the memory footprint by a reported 6x without any measurable loss in the AI’s intelligence or accuracy [2].
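To make "3 bits per value" concrete: a 3-bit code can only take one of eight values (0 to 7), and codes that small do not line up with byte boundaries, so they have to be packed together. The sketch below is generic bit-packing, not TurboQuant's actual storage layout, which this article does not describe. The function names `pack_3bit` and `unpack_3bit` are made up for this example.

```python
def pack_3bit(codes):
    """Pack integers in 0..7 into bytes, using 3 bits per code."""
    buffer, nbits, out = 0, 0, bytearray()
    for code in codes:
        if not 0 <= code <= 7:
            raise ValueError("each code must fit in 3 bits")
        buffer |= code << nbits  # append the code above the bits already waiting
        nbits += 3
        while nbits >= 8:  # flush every complete byte
            out.append(buffer & 0xFF)
            buffer >>= 8
            nbits -= 8
    if nbits:  # flush the final partial byte, if any
        out.append(buffer & 0xFF)
    return bytes(out)


def unpack_3bit(data, count):
    """Recover `count` 3-bit codes from bytes produced by pack_3bit."""
    buffer, nbits, codes = 0, 0, []
    source = iter(data)
    for _ in range(count):
        while nbits < 3:  # pull in another byte when fewer than 3 bits remain
            buffer |= next(source) << nbits
            nbits += 8
        codes.append(buffer & 0b111)
        buffer >>= 3
        nbits -= 3
    return codes


codes = [0, 1, 2, 3, 4, 5, 6, 7]
packed = pack_3bit(codes)
print(len(packed), "bytes for", len(codes), "values")
print(unpack_3bit(packed, len(codes)) == codes)
```

Eight values fit in 3 bytes here, where the same eight values stored as 16-bit numbers would take 16 bytes. The hard part, which this sketch skips entirely, is choosing the 3-bit codes so that the model's accuracy survives.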

It achieves this through a genius two-step process:

PolarQuant: Instead of storing complex data points in standard Cartesian coordinates, it converts vectors into polar coordinates. By separating the magnitude from the directional angles, the data becomes much easier to compress.

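Quantized Johnson-Lindenstrauss (QJL): A mathematical technique that shrinks high-dimensional data while approximately preserving the distances between data points. It builds on the Johnson-Lindenstrauss lemma, which guarantees a projection $f$ into a lower dimension such that, for any two points $u$ and $v$ in the data set and a small error tolerance $\varepsilon$:

$$(1 - \varepsilon)\,\|u - v\|^2 \le \|f(u) - f(v)\|^2 \le (1 + \varepsilon)\,\|u - v\|^2$$

TurboQuant uses this step to correct compression errors with minimal overhead.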
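The two ideas above can be sketched in a few lines of Python. This is a toy illustration under stated assumptions, not Google's implementation: the first half converts a 2D pair to polar form and snaps the angle to one of eight sectors (3 bits), and the second half projects a vector with a random Gaussian matrix, keeps only the sign of each projected coordinate, and uses those sign bits to estimate an inner product. All function names are hypothetical, and details such as how TurboQuant chooses bit widths, handles higher dimensions, or applies the correction to a residual are not shown.

```python
import math
import random


def to_polar(x, y):
    """Cartesian pair -> (radius, angle in [0, 2*pi))."""
    return math.hypot(x, y), math.atan2(y, x) % (2 * math.pi)


def quantize_angle(theta, bits):
    """Index of the equal-width sector (out of 2**bits) that contains theta."""
    levels = 1 << bits
    return int(theta / (2 * math.pi) * levels) % levels


def dequantize_angle(index, bits):
    """Centre angle of the sector with the given index."""
    return (index + 0.5) * 2 * math.pi / (1 << bits)


def from_polar(r, theta):
    return r * math.cos(theta), r * math.sin(theta)


def gaussian_matrix(rows, cols, rng):
    """Random projection matrix with independent standard normal entries."""
    return [[rng.gauss(0.0, 1.0) for _ in range(cols)] for _ in range(rows)]


def dot(a, b):
    return sum(x * y for x, y in zip(a, b))


def sign_encode(key, projection):
    """Store one sign bit per projected coordinate, plus the key's norm."""
    signs = [1 if dot(row, key) >= 0 else -1 for row in projection]
    return signs, math.sqrt(dot(key, key))


def estimate_inner_product(query, signs, norm, projection):
    """Estimate <query, key> from the sign bits (unbiased for Gaussian rows)."""
    total = sum(s * dot(row, query) for s, row in zip(signs, projection))
    return math.sqrt(math.pi / 2) * norm * total / len(projection)


# Polar step: keep the radius, store the angle in 3 bits.
r, theta = to_polar(3.0, 4.0)
index = quantize_angle(theta, bits=3)
print("reconstructed pair:", from_polar(r, dequantize_angle(index, bits=3)))

# Sign-bit projection step on a small random example.
rng = random.Random(0)
key = [rng.gauss(0.0, 1.0) for _ in range(8)]
query = [rng.gauss(0.0, 1.0) for _ in range(8)]
projection = gaussian_matrix(256, 8, rng)
signs, norm = sign_encode(key, projection)
print("true:", dot(query, key))
print("estimate:", estimate_inner_product(query, signs, norm, projection))
```

In the polar step, only the angle is lossy, so the reconstruction error is bounded by the sector width. In the sign-bit step, each stored bit is noisy on its own, but the estimate averages over many of them, so it gets closer to the true value as the number of projection rows grows.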

The result is a reported 8x speedup in processing and a 6x reduction in the memory needed. It was a technical marvel, and Wall Street hated it.

The Immediate Market Reaction: Why Stocks Plummeted

The moment the paper dropped on March 24, 2026, algorithmic trading bots and human investors alike scanned the headlines. They saw “Google,” “reduces memory by 6x,” and “AI.” The immediate, knee-jerk logical leap was catastrophic for memory manufacturers.

The Tech Sector Flash Crash

If an AI model now requires one-sixth of the memory to run, investors reasoned, then companies building AI data centers will buy one-sixth of the memory chips. The math seemed simple, and the sell-off was brutal and swift. Some analysts dubbed it a DeepSeek moment for Google, recalling when highly efficient Chinese models temporarily spooked hardware investors [3].

A Look at Micron, SanDisk, and Samsung

The drop in Micron and SK Hynix shares was the headline story, but the damage was broader. Shares of major memory and storage players went into freefall:

- SanDisk (SNDK): Plunged over 11% in a single day, destroying months of steady gains.

- Micron Technology (MU): Dropped roughly 7%, as fears mounted over its crucial HBM chip sales.

- SK Hynix & Samsung: Slid by 6.2% and 4.7% respectively in the Seoul markets [4].

- Western Digital & Seagate: Both saw significant downward slides of over 4%.

The narrative took hold rapidly: investing in memory semiconductors was suddenly a toxic proposition because Google had effectively “cured” the AI industry’s memory addiction.

The “Trillion-Dollar Misunderstanding” Explained

Here is the unique insight that the panic-sellers completely missed: efficiency does not destroy demand; it creates it. Wall Street looked at the AI market as a fixed pie. They assumed the world only needed a set amount of AI, and if that AI got cheaper to run, spending would drop. This logic fundamentally misunderstands the history of computing and economics.

The Jevons Paradox in Action

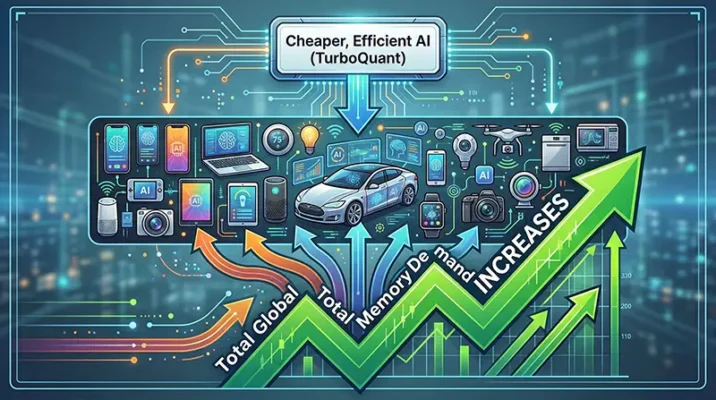

To understand why the market got it wrong, we have to look at how the Jevons paradox applies to AI hardware. In economics, the Jevons paradox occurs when technological progress increases the efficiency with which a resource is used, but total consumption of that resource actually rises because the lower cost increases demand.

Think of it like highway expansion: when you build a wider, more efficient highway to reduce traffic, more people decide to drive because the route is now faster, ultimately leading to more cars on the road.

By easing the KV cache bottleneck, Google didn’t destroy the memory market; it unlocked it.
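The condition under which the paradox kicks in can be written down with a simple textbook model. The sketch below assumes a constant-elasticity demand curve and assumes that the cost of running AI falls in proportion to the memory it needs. Both are simplifying assumptions, and the elasticity values are hypothetical inputs, not measurements of the real AI market.

```python
def memory_demand_multiplier(efficiency_gain, elasticity):
    """Change in total memory demand after an efficiency gain.

    efficiency_gain: factor by which memory per unit of AI work falls
                     (6 means each unit of work needs 6x less memory).
    elasticity:      price elasticity of demand for AI work
                     (hypothetical input, not a measured value).
    """
    # Cheaper AI work means more of it is demanded.
    usage_growth = efficiency_gain ** elasticity
    # Each unit of work now needs less memory.
    return usage_growth / efficiency_gain


for elasticity in (0.0, 1.0, 1.5):
    multiplier = memory_demand_multiplier(6, elasticity)
    print(f"elasticity {elasticity}: total memory demand x{multiplier:.2f}")
```

An elasticity of 0 is the "fixed pie" view: usage does not respond to price, so memory demand falls to one-sixth. At an elasticity of exactly 1 the two effects cancel out, and anything above 1 means total memory demand rises, which is the Jevons paradox. The whole argument therefore rests on how strongly AI usage responds to lower costs.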

Why Lower Costs Mean More AI, Not Less Memory

If a cutting-edge LLM previously required a $40,000 server to run effectively, only hyperscalers like Amazon and Microsoft could afford to deploy it at scale. But with TurboQuant reducing memory requirements by 6x, that same powerful AI can now run on much cheaper, smaller servers, or even directly on high-end consumer hardware.

Lower inference costs mean developers will build more AI applications. They will deploy AI into smartphones, smartwatches, local enterprise servers, and IoT devices. The Total Addressable Market (TAM) for AI just exploded. Instead of selling a few massive chips to a handful of data centers, memory companies will soon be selling millions of high-density chips for edge devices worldwide. Demand for generative AI hardware is shifting, not shrinking.

What This Means for Tech Enthusiasts in Pakistan

You might be wondering: “How does a stock market crash on Wall Street affect me in Karachi, Lahore, or Islamabad?” The answer lies in accessibility and the democratization of technology.

Bringing Powerful AI to Everyday Smartphones

Pakistan has a massive, highly connected youth population that relies heavily on mid-range Android smartphones. Currently, running advanced generative AI natively on a standard mobile device is impractical because the phone simply lacks the RAM. You have to rely on cloud processing, which requires a constant, fast internet connection and costs money.

With PolarQuant and QJL methods drastically shrinking the memory footprint of AI, we are getting closer to a world where a localized, highly intelligent version of Gemini or ChatGPT can run entirely offline on a phone with just 8GB of RAM. This is a game-changer for regions where internet connectivity can be intermittent.

The Democratization of Generative AI

For the booming IT and freelance sectors in Pakistan, high cloud computing costs are a major barrier to entry for startups trying to build AI-driven apps. By slashing the hardware requirements for inference, Google is indirectly slashing the API costs for developers.

Pakistani software engineers, students, and tech entrepreneurs will soon be able to experiment, build, and scale AI applications at a fraction of today’s costs. The “trillion-dollar mix-up” that temporarily crashed Wall Street is actually paving the way for the next wave of global tech innovation.

Quick Takeaways

- The Catalyst: Google Research released “TurboQuant,” an algorithm compressing AI memory usage (KV cache) by 6x using advanced mathematical techniques.

- The Crash: Fearing a drop in hardware demand, Wall Street panicked, causing memory semiconductor stocks (Micron, SanDisk, Samsung, SK Hynix) to plummet.

- The Bottleneck: TurboQuant specifically solves the memory bloat that happens when AI models process long, complex conversations.

- The Misunderstanding: Investors viewed AI hardware demand as a “fixed pie,” ignoring the fact that cheaper AI means wider global adoption.

- The Jevons Paradox: Increased efficiency in AI memory will actually drive up total global demand for chips by pushing AI into edge devices and smartphones.

- The Local Impact: For developers and users in Pakistan, this breakthrough means cheaper cloud computing costs and the eventual arrival of powerful, offline AI on mobile devices.

Conclusion

The clash between Google’s TurboQuant and memory chip stocks will likely be remembered as a classic case study in how financial markets often misunderstand deep technological shifts. The initial shockwave that sent semiconductor stocks tumbling was born out of fear rather than a calculated look at the future of computing.

While the short-term charts looked terrifying for companies like SanDisk and Micron, the long-term outlook is bright. By easing the AI memory bottleneck, Google hasn’t killed the memory industry; it has made it possible for AI to scale much further, reaching every corner of the globe, from ultra-secure enterprise servers in New York to a student’s smartphone in Pakistan. The future of AI is smaller, faster, and cheaper, and that means it’s finally going to be everywhere.

References

- LiveMint. (2026, March 26). Semiconductor stocks sink as Google’s new AI tech threatens memory demand. Retrieved from LiveMint financial news.

- The Next Web (TNW). (2026, March 26). Google’s TurboQuant compresses AI memory by 6x, rattles chip stocks. Retrieved from TNW tech reporting.

- The Korea Herald. (2026, March 27). Google’s TurboQuant jolts memory stocks, but demand outlook seen intact. Retrieved from Korea Herald tech sector analysis.

- Barchart. (2026, March 28). Google’s TurboQuant Shakes SanDisk. What Should You Do With SNDK Stock Now? Retrieved from Barchart market analysis.

Frequently Asked Questions (FAQs)

The KV (Key-Value) cache is the short-term memory an AI uses to remember the context of a conversation. TurboQuant uses a technique called PolarQuant to convert data into polar coordinates, shrinking this cache from 16 bits to just 3 bits per value, making the AI run much faster and cheaper.

Investors feared that if Google’s new algorithm allowed AI models to run on 1/6th of the memory, tech companies would buy significantly fewer memory chips. This panic led to a rapid sell-off of memory stocks like Micron, SanDisk, and SK Hynix.

TurboQuant does not change the memory demands of training. It optimizes the inference phase (when the AI is answering your questions). The training phase (teaching the AI from scratch) still requires massive amounts of data processing and will continue to rely heavily on cutting-edge HBM chips.

The Jevons paradox states that as a resource becomes more efficient, its total usage increases. Because TurboQuant makes AI cheaper to run, tech companies will embed AI into millions of new consumer devices (like appliances and phones), ultimately driving up the total global demand for memory chips.

By drastically reducing the hardware requirements to run AI, this technology will lower the cost of premium AI subscriptions, reduce API costs for local tech startups, and eventually allow advanced AI to run locally on affordable, mid-range smartphones without needing a constant internet connection.

Share Your Thoughts!

We hope this deep dive cleared up the confusion behind the recent market madness! What are your thoughts on this? Do you think the stock market overreacted, or are memory companies genuinely in trouble?

Drop your thoughts in the comments below, and if you found this article insightful, don’t forget to share it with your tech-savvy friends and network on LinkedIn or X (formerly Twitter)!