Agentic AI is silently triggering a brutal silicon drought across the global tech landscape, and the impact is already rippling through server farms from Silicon Valley to Karachi. If you thought the massive GPU shortages of the early 2020s were intense, brace yourself for the new compute crisis of 2026. This time, the target isn’t just graphics processors—it is the foundational brain of every computer: the CPU.

We have officially transitioned from chatbots that simply answer questions to autonomous AI agents capable of planning, executing, and managing complex, multi-step workflows. While traditional generative AI models relied heavily on GPUs to process massive datasets in parallel, the rise of task-oriented AI has shifted the hardware bottleneck. Today, executing an intelligent, multi-agent workflow requires immense sequential logic, memory management, and orchestration, and those workloads fall largely on CPUs.

For tech professionals, software houses, and businesses across Pakistan, understanding this shift is no longer optional. With cloud computing costs soaring and hardware becoming scarce, adapting to the realities of Agentic AI is critical for survival. This comprehensive guide breaks down why this new breed of AI is hoarding processors, how it impacts our local IT ecosystem, and what you can do to future-proof your infrastructure.

Decoding the Silicon Drought

The Shift from GPUs to CPUs

For the better part of a decade, the artificial intelligence infrastructure narrative was strictly one-dimensional: build larger clusters of faster GPUs. During the generative AI boom, GPUs were the undisputed kings because their architecture is specifically designed for parallel computing—crunching millions of calculations simultaneously to train massive language models.

However, Agentic AI introduces a completely different operational paradigm. According to recent infrastructure analyses by major hardware providers like AMD and networking leaders like Ciena, we are witnessing a rapid “repatriation” of CPUs into AI cluster architectures. In 2024, AI inference clusters often operated on an 8:1 GPU-to-CPU ratio to eliminate the “CPU tax.” Today, because autonomous agents require constant data preparation, conditional logic branching, and dynamic API tool execution, that ratio is rapidly narrowing. In some advanced multi-agent data centers, hardware configurations are moving toward a 1:1 ratio just to keep up with the orchestration demands.

Why 2026 is the Tipping Point

Why is this happening right now? The year 2026 marks the critical transition of autonomous systems from experimental laboratory concepts to enterprise-grade production tools. Gartner recently predicted that 40% of enterprise applications will embed AI agents by the end of 2026, a massive leap from less than 5% in 2025. As these systems transition from assistive tools to autonomous decision engines, the sheer volume of sequential processing required to manage them is stretching existing global CPU inventories toward their limits and producing the silicon drought described here.

What Exactly is Agentic AI?

Generative AI vs. Agentic AI

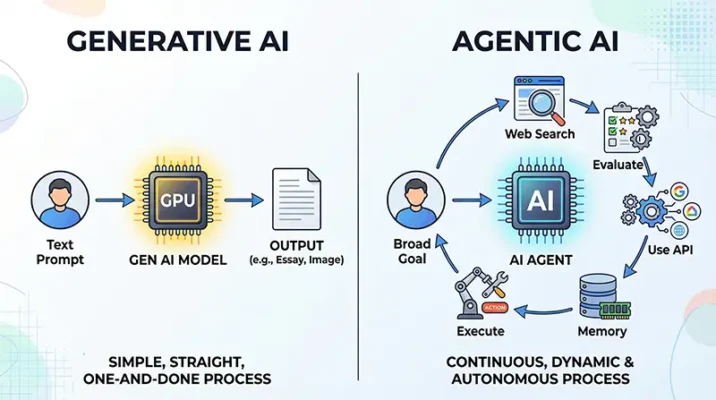

To understand the hardware crisis, we must understand the software evolution. Traditional Generative AI is highly capable but fundamentally reactive. You input a prompt, and the model uses its GPU-powered neural network to generate an output (text, image, or code) and then stops. It is a single, isolated transaction.

Agentic AI, on the other hand, is proactive and goal-driven. It refers to artificial intelligence systems that can accomplish specific, complex objectives with minimal or no human supervision. If you tell a generative model to “plan a marketing campaign,” it will write a text document outlining a plan. If you give the same prompt to an Agentic AI system, it will research current market trends on the web, draft the copy, open your CRM software, schedule the emails, and dynamically adjust the send times based on real-time open rates.

How Autonomous Agents Make Decisions

The defining trait of these systems is their “agency.” They utilize advanced design patterns like ReAct (Reasoning and Acting), reflection, and multi-agent collaboration. When presented with a goal, an autonomous AI agent breaks the problem down into sub-tasks. It hypothesizes solutions, tests them, reviews the outcomes (error checking), and iterates until the goal is achieved. This constant “thinking” and “doing” requires step-by-step, sequential logic—the exact processing style that CPUs were engineered to handle.
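The reason-act-observe cycle described above can be sketched in a few lines of Python. This is a minimal illustration, not any framework's actual implementation: `fake_policy` is a hypothetical stand-in for the model call, and `lookup_price` is an invented tool. Note that everything except the model call itself is ordinary sequential code running on the CPU.

```python
from typing import Callable

# Hypothetical tool; a real agent would wrap an API, database, or shell here.
def lookup_price(item: str) -> str:
    prices = {"widget": "4.50"}
    return prices.get(item, "unknown")

TOOLS: dict[str, Callable[[str], str]] = {"lookup_price": lookup_price}

def fake_policy(goal: str, history: list[tuple[str, str, str]]) -> tuple[str, str]:
    """Stand-in for the LLM: decides the next (action, argument).

    A real system would send the goal and the history to a model here.
    """
    if not history:
        return ("lookup_price", goal)
    last_observation = history[-1][2]
    return ("finish", f"The price is {last_observation}")

def run_agent(goal: str, max_steps: int = 5) -> tuple[str, int]:
    """Loop: reason about the next step, act with a tool, record the observation."""
    history: list[tuple[str, str, str]] = []
    for step in range(1, max_steps + 1):
        action, arg = fake_policy(goal, history)    # reason
        if action == "finish":
            return arg, step
        observation = TOOLS[action](arg)             # act
        history.append((action, arg, observation))   # observe, then iterate
    return "gave up", max_steps

answer, steps = run_agent("widget")
print(answer, f"(after {steps} steps)")
```

The `max_steps` cap is a design choice worth keeping in any real loop: without it, an agent that never reaches "finish" would consume compute indefinitely.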

The Hidden Compute Cost of AI Agents

Multi-Agent Orchestration and Logic

The real compute drain occurs when we move from single agents to multi-agent orchestration. Think of this as a digital corporate office. You don’t just have one AI; you have an AI Project Manager communicating with an AI Researcher, who passes data to an AI Coder, whose work is checked by an AI Quality Assurance agent.

Managing the communication protocols, task allocation, and conflict resolution between these distinct models requires an orchestration layer (often built on frameworks like LangChain or AutoGen). This orchestration layer operates almost entirely on CPUs.
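To make the "digital office" concrete, here is a minimal sketch of an orchestration layer in plain Python. It does not use LangChain or AutoGen; the four agent functions and the `Message` type are hypothetical placeholders for model-backed agents. The point is that routing, queueing, and bookkeeping are CPU-side work that happens on every hand-off.

```python
from collections import deque
from dataclasses import dataclass, field
from typing import Callable, Optional

@dataclass
class Message:
    recipient: str
    payload: str
    trail: list[str] = field(default_factory=list)  # which agents handled it

# Each hypothetical agent returns (next_recipient, new_payload).
# A next_recipient of None means the workflow is finished.
def project_manager(payload: str) -> tuple[Optional[str], str]:
    return "researcher", f"research: {payload}"

def researcher(payload: str) -> tuple[Optional[str], str]:
    return "coder", f"notes for [{payload}]"

def coder(payload: str) -> tuple[Optional[str], str]:
    return "qa", f"code from [{payload}]"

def qa(payload: str) -> tuple[Optional[str], str]:
    return None, f"approved: {payload}"

AGENTS: dict[str, Callable[[str], tuple[Optional[str], str]]] = {
    "project_manager": project_manager,
    "researcher": researcher,
    "coder": coder,
    "qa": qa,
}

def orchestrate(task: str) -> Message:
    """Route messages between agents until one of them ends the workflow."""
    queue = deque([Message("project_manager", task)])
    while queue:
        msg = queue.popleft()
        next_recipient, payload = AGENTS[msg.recipient](msg.payload)
        out = Message(next_recipient or "done", payload, msg.trail + [msg.recipient])
        if next_recipient is None:
            return out
        queue.append(out)
    raise RuntimeError("queue drained without a final result")

result = orchestrate("add login page")
print(" -> ".join(result.trail))
```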

Continuous Loops and Background AI Processing

When an agent encounters a roadblock, it doesn’t give up; it loops back and tries a different tool. This continuous loop generates massive amounts of background AI processing. A single user prompt that consumes 50 tokens of text can easily snowball into a 50,000-token background job as the agent recursively queries databases, reads documentation, and formats data.
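A rough way to see how a small prompt snowballs is to assume that every loop iteration re-reads the whole accumulated context and then appends new tool output to it. The function below is a back-of-the-envelope sketch under that assumption; the step count and tokens added per step are illustrative values, not measurements.

```python
def background_tokens(prompt_tokens: int, steps: int, tokens_added_per_step: int) -> int:
    """Total tokens processed when each step re-reads the growing context."""
    total = 0
    context = prompt_tokens
    for _ in range(steps):
        total += context                   # the model re-reads everything so far
        context += tokens_added_per_step   # tool output and reasoning are appended
    return total

# Illustrative assumption: 10 loop iterations, each adding about 1,000 tokens.
print(background_tokens(prompt_tokens=50, steps=10, tokens_added_per_step=1000))
```

Under these assumed numbers, the 50-token prompt leads to 45,500 tokens of processing, because the cost grows roughly with the square of the number of steps rather than linearly.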

The Role of Memory and State Management

Unlike a standard search query, an autonomous agent must maintain a “state” or memory of what it has already done. Reading and writing to vector databases to retrieve contextual history (episodic and semantic memory) is an I/O (Input/Output) intensive task. CPUs act as the traffic directors here, pulling data from storage, managing the cache, and ensuring the GPU has the exact context it needs to generate the next logical step. As context windows grow to 32K tokens and beyond, this memory management becomes a heavy computational burden.
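As an illustration of the state lookup described above, here is a toy in-memory version of what a vector database does on each recall: score every stored item against the query and return the closest match. The class name `AgentMemory` and the two-dimensional embeddings are hypothetical; a real system would use a learned embedding model and an indexed store.

```python
import math

def cosine(a: list[float], b: list[float]) -> float:
    """Cosine similarity between two vectors of equal length."""
    dot = sum(x * y for x, y in zip(a, b))
    norm = math.sqrt(sum(x * x for x in a)) * math.sqrt(sum(y * y for y in b))
    return dot / norm if norm else 0.0

class AgentMemory:
    """Toy episodic memory: stores (embedding, text) pairs in a list."""

    def __init__(self) -> None:
        self._items: list[tuple[list[float], str]] = []

    def remember(self, embedding: list[float], text: str) -> None:
        self._items.append((embedding, text))

    def recall(self, query: list[float], k: int = 1) -> list[str]:
        # Brute-force scan: every recall touches every stored item.
        ranked = sorted(self._items, key=lambda item: cosine(query, item[0]), reverse=True)
        return [text for _, text in ranked[:k]]

memory = AgentMemory()
memory.remember([1.0, 0.0], "cloned the repository")         # hand-made embedding
memory.remember([0.0, 1.0], "unit tests failed on login")    # hand-made embedding
recalled = memory.recall([0.1, 0.9])
print(recalled)
```

The brute-force scan makes the cost visible: retrieval work scales with the amount of history the agent has stored, and it is repeated on every step of the loop.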

Impact on Pakistan’s Tech Ecosystem

Software Houses and Freelance Hubs

Pakistan’s IT sector is a vital economic engine, with IT exports crossing the $3.2 billion mark recently. Cities like Lahore, Karachi, and Islamabad are home to thousands of software houses and top-tier freelancers operating on the bleeding edge of tech. However, the rise of Agentic AI presents a unique challenge for this ecosystem.

Local developers are increasingly shifting from building simple wrapper apps around OpenAI’s API to deploying custom autonomous agents for international clients. Because these multi-agent systems require continuous backend processing to remain “always on,” local servers and developer machines are struggling to keep up. The Core i5 or i7 laptops that were sufficient for web development now become the bottleneck when running local agentic loops.

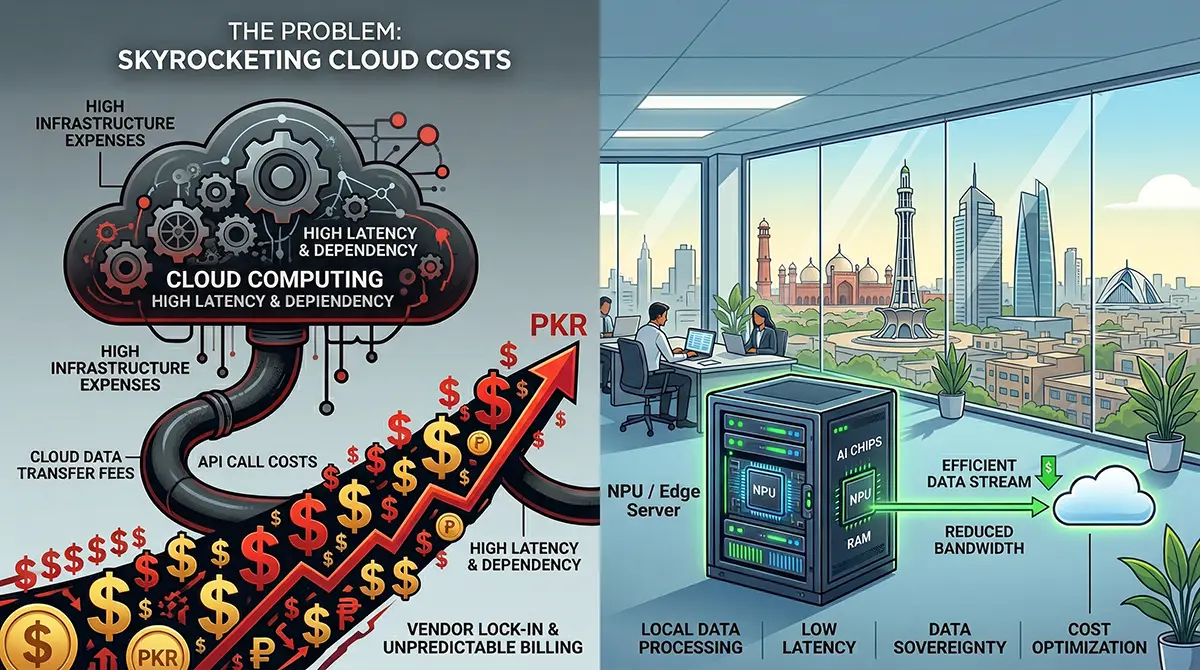

Skyrocketing Cloud Computing Costs

The most severe impact of the silicon drought is being felt in cloud billing. Pakistan’s cloud adoption market is projected to reach approximately $180 million in 2025/2026, heavily reliant on providers like AWS, Microsoft Azure, and Google Cloud.

As global demand for CPU-heavy compute instances skyrockets to support background AI processing, the cost of cloud computing is surging. For Pakistani tech firms already dealing with currency devaluation against the US Dollar, these rising costs are a double blow: the bill grows in dollars, and each dollar costs more rupees. Recent industry analyses indicate that local organizations are experiencing cloud cost overruns of up to 23%. Running an autonomous AI agent 24/7 on an AWS EC2 instance is vastly more expensive than hosting a traditional web application, forcing local CTOs to drastically rethink their cloud architecture and adopt strict FinOps (Financial Operations) practices.
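A first FinOps step is simply doing the arithmetic for an always-on agent. The sketch below is illustrative only: the hourly rate and the exchange rate are placeholder assumptions, not real AWS prices or market rates, and the 23% figure is the overrun cited above.

```python
HOURS_PER_MONTH = 24 * 30  # an always-on agent over a 30-day month

def monthly_cost_usd(hourly_rate_usd: float, instances: int = 1) -> float:
    """Planned monthly spend for instances that never shut down."""
    return hourly_rate_usd * HOURS_PER_MONTH * instances

def with_overrun(budget_usd: float, overrun_pct: float) -> float:
    """Budget after applying a percentage cost overrun."""
    return budget_usd * (1 + overrun_pct / 100)

def to_pkr(amount_usd: float, pkr_per_usd: float) -> float:
    """Convert dollars to rupees at a given exchange rate."""
    return amount_usd * pkr_per_usd

# Placeholder inputs: replace with your provider's rate and the current exchange rate.
base = monthly_cost_usd(hourly_rate_usd=0.10)
actual = with_overrun(base, overrun_pct=23)
print(f"planned ${base:.2f}, with overrun ${actual:.2f}, "
      f"about PKR {to_pkr(actual, pkr_per_usd=280):,.0f}")
```

Keeping the exchange rate as an explicit parameter makes it easy to rerun the estimate when the rupee moves, which is the second half of the cost pressure described above.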

Real-World Examples of the CPU Eaters

Autonomous Coding Assistants

We are far past the days of GitHub Copilot simply auto-completing a line of code. In 2026, systems like “Devin” and various open-source SWE-agents operate as fully autonomous software engineers. You can give them a GitHub issue, and they will clone the repository, read the documentation, spin up a secure sandbox, write the code, run unit tests, debug their own errors, and submit a pull request. The heavy lifting here (navigating directories, compiling code, and parsing terminal outputs) is almost entirely CPU-bound work.

Customer Service Swarms

Gartner predicts that Agentic AI will autonomously resolve 80% of common customer service issues without human intervention by 2029. We are already seeing the groundwork today. Enterprise customer support now utilizes “swarms” of specialized agents. When a customer emails a complaint, a triage agent reads it, an investigative agent queries the company’s SQL database to check the order status, a policy agent ensures the proposed solution complies with company rules, and a communication agent drafts the reply. This complex, multi-system coordination operates seamlessly, but it voraciously consumes CPU cycles in enterprise data centers.
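The four-agent hand-off described above can be sketched with plain functions and an in-memory SQLite database. Everything here is hypothetical: the `orders` table, the voucher rule, and the keyword-free triage are stand-ins for model-backed agents and real company systems. None of these steps needs a GPU; they are parsing, querying, and rule checks.

```python
import sqlite3

def build_demo_db() -> sqlite3.Connection:
    """Create a throwaway in-memory database with one sample order."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE orders (id INTEGER PRIMARY KEY, status TEXT)")
    conn.execute("INSERT INTO orders VALUES (1001, 'delayed')")
    return conn

def triage(email: str) -> dict:
    """Triage agent: extract the first number in the email as the order id."""
    digits = "".join(ch if ch.isdigit() else " " for ch in email).split()
    return {"intent": "order_status", "order_id": int(digits[0])}

def investigate(conn: sqlite3.Connection, ticket: dict) -> dict:
    """Investigative agent: look up the order status with a parameterized query."""
    row = conn.execute(
        "SELECT status FROM orders WHERE id = ?", (ticket["order_id"],)
    ).fetchone()
    return {**ticket, "status": row[0] if row else "not_found"}

def apply_policy(ticket: dict) -> dict:
    """Policy agent: hypothetical rule that delayed orders get a voucher."""
    remedy = "10% voucher" if ticket["status"] == "delayed" else "none"
    return {**ticket, "remedy": remedy}

def draft_reply(ticket: dict) -> str:
    """Communication agent: turn the ticket into a customer-facing reply."""
    return (f"Order {ticket['order_id']} is {ticket['status']}. "
            f"Remedy offered: {ticket['remedy']}.")

def handle(email: str, conn: sqlite3.Connection) -> str:
    return draft_reply(apply_policy(investigate(conn, triage(email))))

reply = handle("Where is my order 1001?", build_demo_db())
print(reply)
```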

Surviving the Drought: Future-Proofing Your Tech Setup

Embracing Local AI Deployment

To insulate against rising cloud costs and global CPU shortages, tech companies must optimize how they deploy AI. One of the primary survival strategies is shifting toward local AI deployment. By utilizing smaller, highly specialized Language Models (SLMs) instead of massive LLMs, developers can run efficient agents directly on edge devices or localized company servers, drastically reducing expensive API calls and cloud reliance.
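One simple way to apply this strategy is a router that sends routine work to a local small model and reserves the cloud model for everything else. The sketch below is an assumption-laden illustration: the keyword list, the word limit, and the labels `local-slm` and `cloud-llm` are invented for the example, and a production router would likely use a classifier rather than keywords.

```python
# Hypothetical markers of routine work that a small local model can handle.
ROUTINE_KEYWORDS = ("classify", "extract", "summarize", "format")

def choose_model(task: str, max_local_words: int = 200) -> str:
    """Return which (hypothetical) model tier should handle the task."""
    words = task.lower().split()
    is_routine = any(word.strip(":,.") in ROUTINE_KEYWORDS for word in words)
    if is_routine and len(words) <= max_local_words:
        return "local-slm"   # runs on company hardware, no API charge
    return "cloud-llm"       # reserved for open-ended or very long tasks

for task in (
    "Extract the invoice number from this email",
    "Design a migration plan for our billing system",
):
    print(f"{choose_model(task):10s} <- {task}")
```

The design choice is to default to the expensive model and opt in to the cheap one, so that a misrouted task costs money rather than answer quality.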

Edge Computing and NPUs

The hardware industry is responding to the silicon drought by integrating Neural Processing Units (NPUs) directly into consumer and enterprise CPUs. NPUs are specialized hardware accelerators designed to handle specific AI tasks efficiently without draining the main processor’s power. For Pakistani IT professionals upgrading their infrastructure in 2026, investing in NPU-equipped hardware is no longer a luxury; it is a necessity. Offloading routine AI inference compute to an NPU frees up the CPU to handle the complex orchestration and logic routing that multi-agent systems demand.

Quick Takeaways

- The Paradigm Shift: AI has moved from reactive generation (GPUs) to proactive, autonomous task execution (CPUs).

- The New Bottleneck: Agentic AI requires heavy sequential logic, state management, and orchestration, causing a massive spike in CPU demand and a global silicon drought.

- Multi-Agent Complexity: Modern AI systems deploy teams of specialized agents that must communicate with each other, multiplying the background compute required.

- Impact on Pakistan: Surging global cloud computing costs, combined with currency fluctuations, are causing severe budget overruns for local software houses and freelancers.

- Strategic Adaptation: Surviving the compute crisis requires adopting local AI deployment, utilizing Small Language Models (SLMs), and investing in hardware equipped with NPUs.

Conclusion

The evolution of Agentic AI represents one of the most significant leaps in technology since the invention of the internet. We are moving toward the stepping stones of artificial general intelligence, where software doesn’t just assist us but operates independently on our behalf. However, this profound capability comes at a steep infrastructural cost.

The brutal silicon drought we are witnessing is a testament to the fact that computing architecture must fundamentally change. For the tech-oriented people of Pakistan—from startup founders in Karachi to enterprise architects in Lahore—ignoring this shift is a direct threat to profitability and competitiveness. By understanding the heavy compute toll of multi-agent orchestration and proactively shifting toward optimized cloud governance and edge computing, you can turn this silicon crisis into a strategic advantage.

Are you ready to optimize your infrastructure for the autonomous future? Start by auditing your current cloud expenditures and evaluating your team’s readiness to deploy smaller, localized AI agents today.

References

- IBM Research (2026). What is Agentic AI? – Explores the foundational shift from generative models to autonomous systems capable of executing multi-step goals.

- Gartner Press Release (2025/2026). Gartner Predicts Agentic AI Will Autonomously Resolve 80% of Common Customer Service Issues Without Human Intervention by 2029.

- MachineLearningMastery (2026). 7 Agentic AI Trends to Watch in 2026. – Details the rise of multi-agent orchestration and protocol standardization.

- AMD Industry Blog (2026). Agentic AI Brings New Attention to CPUs in the AI Data Center. – Outlines the shifting GPU-to-CPU ratios and the critical role of CPUs in AI inference and workflow management.

- Sherdil IT Academy (2025/2026). State of Cloud Adoption in Pakistan: Industry Analysis. – Highlights the $180M cloud market trajectory in Pakistan and the urgent need for cost governance amid rising compute demands.

Frequently Asked Questions (FAQs)

Generative AI produces static outputs (like text or images) based on a single prompt. Agentic AI consists of autonomous AI agents that can break down complex goals into steps, use external tools (like web browsers and APIs), and operate in continuous loops to complete tasks without constant human oversight.

While training AI models requires GPUs for parallel processing, running autonomous agents (AI inference compute) requires sequential logic, memory management, and multi-agent orchestration. These tasks rely heavily on CPUs, causing a massive spike in global demand for high-performance server processors.

The increased global demand for CPU compute has driven up cloud computing costs. Pakistani software houses and startups relying on platforms like AWS or Azure are facing significantly higher operational expenses (often experiencing overruns of 20% or more), amplified by local currency dynamics.

Developers can reduce costs by adopting local AI deployment, using highly optimized Small Language Models (SLMs) instead of large models for routine tasks, leveraging edge computing, and utilizing hardware with dedicated NPUs to handle background AI processing efficiently.

We Want to Hear from You!

The shift toward autonomous AI is fundamentally changing how we write code, do business, and manage infrastructure. How is your team handling the rising costs of cloud compute and AI deployment in Pakistan?

Drop a comment below with your strategies, or share this article with your network on LinkedIn and Twitter to keep the conversation going! What autonomous agent do you wish you had working for you right now?