Picture this: You are trying to finish up some remote work or cook dinner in your busy home in Lahore or Karachi, and to buy a few minutes of peace, you hand your toddler a tablet. It is a universal modern parenting survival tactic. But have you ever paused to actually look at what they are watching? What used to be simple, human-created cartoons has rapidly morphed into a bizarre digital landscape. Welcome to the era of “AI slop”—a term that has taken the tech world by storm.

Recently, Google made a controversial move that has tech analysts and child safety advocates equally fired up. Through its AI Futures Fund, the tech giant invested $1 million in Animaj, a children’s media startup that relies heavily on artificial intelligence to produce content. While $1 million is a drop in the bucket for a company of Google’s size, it represents a major paradigm shift. It is the first direct investment of its kind, signaling that AI is the new frontier for kids’ entertainment.

This article will dive deep into what this investment means for the tech ecosystem, what “AI slop” actually is, how it affects early childhood development, and what Pakistani parents and tech enthusiasts need to know to navigate this brave, and sometimes bizarre, new digital world.

The $1 Million Google Investment Shaking Up Kids’ Media

When Google announced its backing of Animaj in March 2026, it wasn’t just another routine venture capital deal. It was a clear statement of intent about the future of AI-generated kids’ videos. The investment, funneled through the company’s AI Futures Fund, is a relatively modest cash injection of $1 million, but more importantly, it unlocks access to some of the most powerful generative AI tools on the planet.

What is Animaj and Why Did Google Back It?

Animaj is a digital animation studio that positions itself as a “next-generation media company.” Its core business model revolves around acquiring existing children’s intellectual properties (like Pocoyo or Maya the Bee) and using advanced artificial intelligence to scale them globally at breakneck speed. Using proprietary tools like “Sketch-to-Pose,” which reportedly cuts animation time from 14 hours down to just six, Animaj has accumulated over 22 billion views across its affiliated YouTube channels.

For tech-savvy readers in Pakistan who follow startup scaling, this is a masterclass in aggressive digital expansion. By backing Animaj, Google is essentially placing a bet on a highly automated future for content creation. Under the deal, Animaj gains exclusive, early access to Google’s flagship video-generation model, Veo, alongside the multimodal model Gemini and the image-generation model Imagen. The director of Google’s AI Futures Fund touted the partnership as a “blueprint for the future” of responsible children’s media.

The Double-Edged Sword of Generative Tools

However, this partnership has ignited a firestorm of criticism. Child safety advocates and digital wellness groups, such as Fairplay, argue that by backing a company whose channels target infants, Google is inadvertently legitimizing and supercharging a highly problematic content ecosystem. While Animaj claims to use generative AI tools for animation responsibly—insisting that human oversight reviews every episode—critics view this as a slippery slope.

The concern is that this validation will encourage thousands of other less-scrupulous creators to leverage AI video generator tools to flood the market. In a tech landscape where rapid deployment often outpaces ethical regulation, many fear that this investment prioritizes watch time and scalability over developmental value, setting a dangerous precedent for what the youngest, most vulnerable viewers are exposed to on screens every day.

What Exactly is “AI Slop” and Why Is It Everywhere?

If you spend any time tracking digital trends, you might know that Merriam-Webster crowned “slop,” in the sense of low-quality content mass-produced by AI, its 2025 Word of the Year. But what exactly does “AI slop” mean, and why has it become such a problem on YouTube?

The Rise of Brain Rot Content on YouTube

“AI slop” refers to low-quality, mass-produced digital content generated primarily by artificial intelligence. It is designed not to educate, inspire, or even tell a coherent story, but simply to game algorithms and hijack human attention. Imagine a video where a baby is inexplicably crawling into a pre-launch space rocket, or where rhinos suddenly morph into quadcopter drones amid a cacophony of distorted nursery rhymes. This is the reality of the content currently flooding the screens of toddlers worldwide.

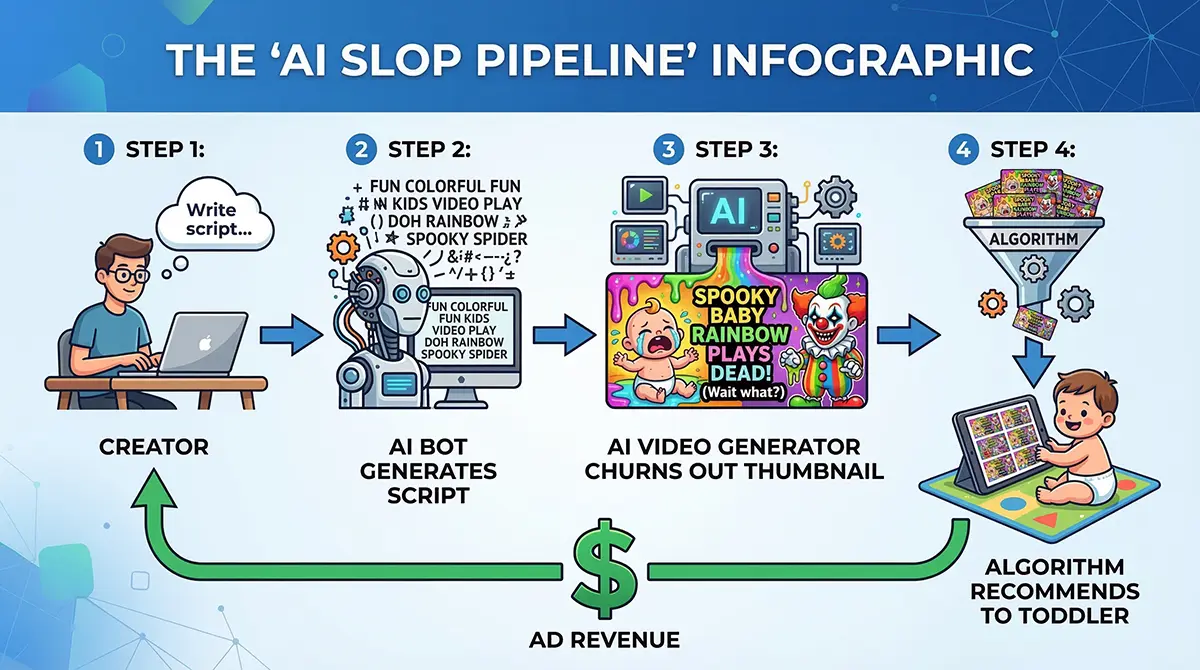

For Pakistani tech enthusiasts familiar with the creator economy, the mechanics behind this are obvious. It is a volume game. Using AI, a creator can ask a text bot to write repetitive lyrics, plug them into a video generator, and churn out dozens of vibrant, hyper-stimulating videos in a single afternoon. These videos feature loud noises, erratic movements, and a complete lack of narrative logic—often referred to as “brain rot content.”

The Word of the Year That No Parent Wants to Hear

The defining characteristics of AI slop are superficial competence, asymmetric effort, and mass producibility. It looks just real enough to pass a casual glance, but upon closer inspection, it is an eerie, uncanny valley of disjointed images. A recent New York Times investigation found that when watching popular, non-AI kids’ content on YouTube, the recommendation algorithm quickly pipelines viewers into a stream where 40% of the subsequent videos are AI-generated slop.

This low-quality digital content creates a massive issue for platforms. The algorithm cannot differentiate between a beautifully crafted educational video and an AI-generated fever dream of dancing animals; it only knows what keeps eyes on the screen. Consequently, AI slop is everywhere because it exploits the attention economy flawlessly, filling up feeds with digital clutter that prioritizes speed and quantity over substance.

The Hidden Dangers: How AI Slop Impacts Early Development

While adults can easily scroll past a poorly generated AI image, the stakes are significantly higher when the target audience is still in diapers. The flood of AI media aimed at infants is not just an annoyance; child development experts warn it could be actively harmful.

Sensory Overload and Screen Time Concerns

The human brain during the first two years of life is wiring itself to understand reality, physics, language, and social cues. When a child is exposed to AI slop, they are bombarded with hyper-stimulating visuals that defy logic and natural pacing. Child safety advocates, like Rachel Franz from the Young Children Thrive Offline program, explain that these “mesmerizing” videos displace crucial time that children need to spend playing, socializing, and interacting with their caregivers.

Unlike human-created educational shows, which often use a slower pace and call-and-response cues to encourage interaction, AI slop is designed to induce a trance-like state. It is a barrage of looping animations and sensory overload. Research consistently links more screen time in early childhood to poorer performance on developmental measures, including attention span and emotional regulation. When reality is replaced by hallucinated AI visuals where gravity and logic don’t apply, it fundamentally disrupts a child’s understanding of the world.

What Pediatricians in Pakistan Need Parents to Know

In Pakistan, where large, multi-generational households are common, the tablet often serves as a digital babysitter. However, pediatric guidelines globally, including those from the American Academy of Pediatrics, strongly advise avoiding digital media for children younger than 18 to 24 months.

When you introduce AI slop into this equation, you add a layer of unpredictability. The distorted voices, incorrect physical proportions, and erratic scene changes can cause anxiety and overstimulation. A recent warning from child safety advocates argued that investing in platforms that serve this content is, in effect, investing in harming babies. Because AI-generated dross lacks meaningful narrative, kids aren’t learning language or empathy; they are merely being conditioned to stare at flashing lights.

The Profit Motive: Why Creators Target the Under-2 Crowd

To understand why YouTube is rife with this content, one must look at the economics of the creator industry. Why are highly advanced neural networks being used to generate nonsense videos of colorful blobs and singing trucks? The answer lies in the easiest demographic to monetize.

The Ultimate Uncomplaining Consumers

Babies and toddlers are the ultimate uncomplaining consumers of digital media. According to recent demographic data, YouTube usage among children under two has skyrocketed. Children this young do not care about plot holes, do not leave negative comments, and, most importantly, do not click the “Skip Ad” button.

For creators looking to make a quick profit with minimal effort, this is a goldmine. A creator can use AI video generator tools to do 95% of the work. They set up automated pipelines that generate scripts, synthesize voices, and render animations while they sleep. This entirely automated grift has resulted in channels amassing millions of subscribers and billions of views in a matter of months. Some of the fastest-growing channels globally are strictly showing AI-generated content aimed at infants.

Monetizing Nonsense: A Look at the Economics

This rapid monetization creates a race to the bottom. In developing tech markets, including segments within our local tech ecosystem in Pakistan, the temptation to generate foreign revenue through YouTube monetization is high. However, when the barrier to entry drops to zero, the platform is flooded.

While Google has stated it demonetizes accounts that post “low quality clutter,” the sheer volume of AI slop makes it a game of digital whack-a-mole. Creators use tactics to bypass these filters, ensuring their high-volume, low-effort videos continue to generate ad revenue. This profit motive ensures that until platforms structurally change how content is rewarded, the under-2 crowd will remain the prime target for synthetic media exploitation.

Balancing Innovation with Child Safety: The Road Ahead

The debate around Google’s $1 million investment is not just about a single company; it is a proxy war for the future of the internet. Can generative AI actually be used to create high-quality, enriching content for children, or is the technology inherently biased towards producing slop?

Can AI Be Used Responsibly for Children’s IPs?

Animaj’s co-founder, Sixte de Vauplane, argues that they are acutely aware of the AI slop problem. He contends that their studio is proving how AI can be used in a “very good way” to scale beloved franchises like Maya the Bee. In theory, AI could reduce production budgets, allow for rapid global dubbing, and make diverse, educational content more accessible. When guided by skilled human UX designers and child-psychology advisory boards, AI has the potential to be a powerful co-pilot in digital storytelling.

However, the current reality falls short of this ideal. Even with human oversight, the pressure to produce content at scale often leads to compromised quality. The challenge lies in distinguishing between AI used as a tool for creative enhancement versus AI used as an engine for mass-produced distraction.

Closing the Platform Policy Loopholes

A major hurdle in this balance is existing platform policies. Currently, YouTube’s synthetic media disclosure rules require creators to label realistic deepfakes of humans, but they explicitly exempt animated content. This massive loophole means that AI-generated cartoons do not carry a warning label, leaving parents entirely in the dark.

Child advocates are demanding transparent labeling, autoplay switched off by default, and robust age safeguards. If Google truly wants to champion responsible AI, it must enforce strict provenance tags and monetization limits on hyper-stimulating, algorithmically generated content. Until these policy gaps are closed, the responsibility of filtering out the slop falls entirely on overwhelmed parents.

Protecting Your Children from Digital Clutter

As the digital landscape becomes increasingly saturated with AI-generated media, parents and guardians in Pakistan must take proactive steps to protect their children’s developing minds. Waiting for tech giants to self-regulate is no longer a viable strategy.

Practical Steps for Pakistani Parents

First and foremost, awareness is your best defense. You must learn to spot the “tells” of AI slop. Look for surreal, disjointed plots, strange physical transformations (like a cat turning into a car), and distorted, robotic audio.

- Turn off Autoplay: This is the most crucial step. Autoplay is engineered to keep kids in algorithmic rabbit holes. By disabling it, you break the cycle of endless, unsupervised scrolling.

- Curate, Don’t Delegate: Instead of relying on YouTube’s recommendation algorithm, curate specific, high-quality channels. Look for slower-paced, human-created content that encourages call-and-response.

- Demand Transparency: While platforms lag in synthetic media disclosure, you can use tools like YouTube Kids with strict parent-approved-only settings to ensure no unverified content slips through.

Reclaiming Human Interaction Over Algorithms

Ultimately, the most effective antidote to AI slop is human connection. Pediatricians emphasize that screen time should ideally be a co-viewing experience. Sit with your child, ask them questions about what they are watching, and bring the digital content into the real world.

In a world where algorithms are designed to maximize engagement through sensory overload, prioritizing offline play, socialization, and reading is a radical act of care. Technology and AI are not inherently evil, but they must remain tools that serve us, rather than autonomous systems that raise our children.

Quick Takeaways

- Google’s Big Bet: Google invested $1 million in Animaj, an AI kids’ animation studio, sparking massive debate over the future of children’s media.

- Rise of AI Slop: “AI Slop” (Word of the Year 2025) refers to mass-produced, low-effort, surreal AI videos that are currently flooding YouTube.

- Targeting Babies: Creators target children under two because they are a captive audience that doesn’t complain or skip ads, making it highly lucrative.

- Developmental Dangers: Experts warn that the hyper-stimulating nature of AI slop can cause sensory overload and negatively impact brain wiring in early childhood.

- Policy Loopholes: Current YouTube policies do not require AI disclosure labels for animated content, leaving parents in the dark.

- Parental Action: Parents must disable autoplay, heavily curate viewing lists, and prioritize human co-viewing to combat digital clutter.


Conclusion

Google’s $1 million gamble on Animaj has pulled back the curtain on a quiet but massive shift in how media is produced for the youngest generation. While artificial intelligence holds incredible potential to democratize content creation and streamline animation, the current reality of the internet paints a much darker picture. The unchecked proliferation of “AI slop” proves that when powerful generative tools meet the ruthless economics of the attention economy, quality is the first casualty.

For the tech-oriented communities and families across Pakistan, this serves as a critical wake-up call. We are witnessing an era where algorithms are inadvertently taking on the role of digital babysitters, feeding our children a diet of surreal, hyper-stimulating, and ultimately hollow content. The impact on early brain development and the loss of genuine human interaction are costs too high to ignore.

The platforms must be held accountable to close policy loopholes and enforce strict labeling. But until then, the power lies in your hands. Take control of the remote, dive into the settings, and reclaim your child’s digital diet.

Call to Action: Don’t let an algorithm dictate your child’s reality. Tonight, take 5 minutes to audit your child’s YouTube history, turn off autoplay, and replace one screen-time session with a physical book or a puzzle.

References

- Mashable (March 2026): ‘Harming babies’: Child safety group blasts Google’s investment in AI content for kids. Detailed reports on the $1 million investment in Animaj and the subsequent backlash from Fairplay regarding infant safety.

- Bloomberg (2025/2026): Investigations into the creator economy, highlighting how YouTube creators are serving ‘AI Slop’ to babies to capitalize on uncomplaining demographics and ad revenue.

- The New York Times (March 2026): YouTube’s A.I. Slop Problem … for Kids. An extensive analysis showing that recommendation algorithms pipeline young viewers from safe content into streams heavily populated by AI-generated videos.

- Gizmodo (March 2026): Google Invests $1 Million in Company That Makes AI YouTube Videos for Kids. Coverage on Animaj’s use of Google’s Veo video models and the sheer volume (22 billion views) of their content network.

- Wikipedia / Merriam-Webster (2025): Definition and cultural context of “AI slop,” the sense behind Merriam-Webster’s 2025 Word of the Year (“slop”), detailing its mass producibility and superficial competence.

Frequently Asked Questions (FAQs)

What is the YouTube AI slop problem?

The YouTube AI slop problem refers to the flood of low-effort, mass-produced videos generated by artificial intelligence. These videos often feature bizarre, surreal visuals and nonsensical audio aimed primarily at young children to generate ad revenue through high watch times.

Why did Google invest in Animaj?

Google invested $1 million in Animaj through its AI Futures Fund to explore how generative AI tools can scale existing children’s intellectual properties. Google sees this as a potential blueprint for the future of digital media, though critics argue it normalizes low-quality digital content.

How does AI slop affect early childhood development?

Experts warn that AI-generated brain rot content provides intense sensory overload without any educational or narrative value. This hyper-stimulation can disrupt attention spans, hinder language development, and displace crucial time meant for real-world play and social interaction.

Does YouTube require labels on AI-generated kids’ videos?

Currently, there is a major loophole. While YouTube requires synthetic media disclosure for realistic deepfakes of real people, it explicitly exempts animated content. This means creators can mass-produce AI cartoons without adding labels or warnings for parents.

How can I protect my children from AI slop?

You can protect your children by turning off the autoplay feature, using the “Approved Content Only” setting on YouTube Kids, actively co-viewing content with them, and learning to spot the erratic visual and audio “tells” of AI generator tools.

Join the Conversation!

What are your thoughts on AI being used to create content for children? Have you noticed strange, AI-generated videos popping up in your kids’ feeds? Drop a comment below and share your experiences! If you found this article helpful, please share it with other parents, educators, and tech enthusiasts in your network to help spread awareness about digital safety.

Question for you: Do you think tech platforms should completely ban AI-generated content for children under 5, or just label it clearly? Let us know!