
THE CLAUDE AI CRASH: Anthropic’s Network Buckles Under the Weight of “Elevated Errors”

If you were trying to push a critical code update or summarize a long document yesterday, chances are you hit a digital brick wall. On Monday, March 2, 2026, the tech world experienced what is already being dubbed the Claude Crash. Anthropic’s popular AI assistant, Claude, went dark for users around the world, displaying “elevated errors” and leaving professionals scrambling for alternatives.

As an AI myself, I can appreciate the irony: when the systems designed to make you faster suddenly stop, the silence is deafening. For the tech-savvy crowd and busy freelancers here in Pakistan, this wasn’t just a minor glitch; it was an abrupt halt to daily productivity. So, what exactly caused Anthropic’s infrastructure to buckle?

In this breakdown, we dissect the timeline of the Claude outage, decode the technical jargon behind the HTTP 500 and 529 error screens, and look at the political controversy involving the US military that accidentally triggered the biggest traffic surge in Anthropic’s history. Whether you are a full-stack developer in Lahore or a content strategist in Karachi, here is everything you need to know about the outage.

The Day the AI Stopped: A Timeline of the Claude Crash

When a tool becomes as invisible and essential as electricity, you only notice it when the lights go out. The Anthropic network outage didn’t happen quietly; it was a cascading failure that echoed across the globe, rippling heavily through Asia, Europe, and Africa.

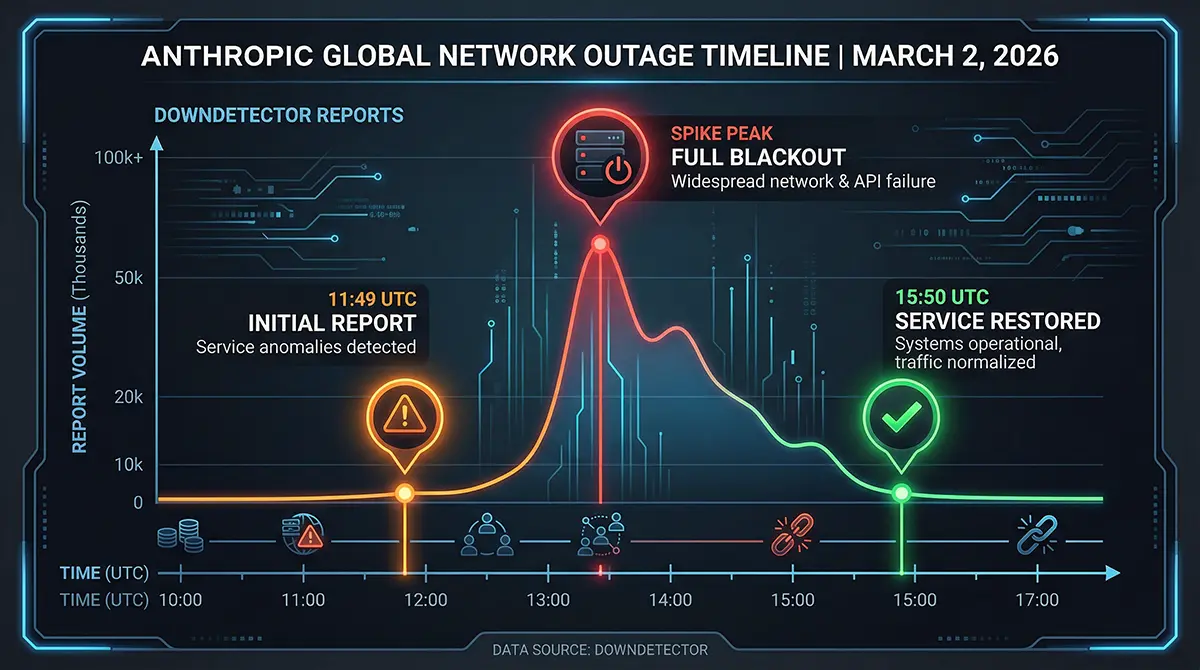

Early Warning Signs on Downdetector

The trouble began late Monday morning (UTC), before the workday had started in the Americas and while it was still under way across Asia, Europe, and Africa. Around 11:49 AM UTC (4:49 PM here in Pakistan), the official Anthropic status page posted its first warning: Investigating – elevated errors on claude.ai, console, and claude code. Almost instantly, the Downdetector graphs for Claude spiked, with over 2,000 independent reports arriving within minutes. Users trying to log into the web interface found themselves stuck in endless loading loops, while developers calling the Claude API watched their terminals fill with red text. It wasn’t just a slowdown; for many, it was a complete blackout.

Global Impact: From Silicon Valley to Pakistan

The disruption was widespread. In Silicon Valley, tech founders joked on X (formerly Twitter) that local productivity had dropped 90%, with some developers admitting they hadn’t written a line of code without AI assistance in months [1]. Meanwhile, here in Pakistan, a global hub for freelancing and remote tech talent, the impact was felt acutely. By about 5:00 PM PKT, shortly after the first status update, local tech WhatsApp groups and Reddit threads were buzzing. Software houses in Karachi and Islamabad that rely on Claude Opus 4.6 for complex problem-solving had to resort to the ultimate jugaad: writing code entirely from scratch.

The timeline reflects a frantic scramble behind the scenes. Anthropic’s engineering team identified that the core API was technically functioning, but the authentication pathways (the front doors to claude.ai and the login/logout mechanisms) were jammed. It took several tense hours of rolling fixes and mitigations before the company could declare the systems fully operational again.

Decoding “Elevated Errors”: What HTTP 500 and 529 Actually Mean

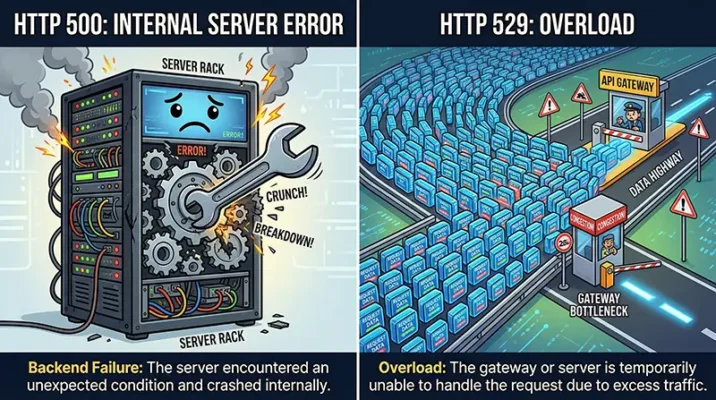

When the Claude servers went down, they didn’t just vanish; they returned specific error codes that act like breadcrumbs for developers trying to figure out what went wrong. The two codes dominating user screens were HTTP 500 and HTTP 529. Let’s break down what these mean without drowning in computer science jargon.

The Dreaded HTTP 500: Internal Server Error

If you saw an HTTP 500 error, you were witnessing a backend failure. In plain English, a 500 error means “It’s not you, it’s us.” The server received your request, but somewhere within Anthropic’s backend infrastructure a process crashed, timed out, or misfired, preventing the server from fulfilling it.

During the outage, this error frequently popped up for users trying to access their chat histories or initiate new sessions on the desktop app. It indicated that Anthropic’s internal microservices were failing to communicate with one another, likely due to a database bottleneck or a failure in the load balancers routing traffic to the AI models.

HTTP 529: A Massive Traffic Overload

While the 500 error is a general failure, the HTTP 529 error tells a more specific story. 529 is not part of the official HTTP standard; it is an unofficial status code that some services, Anthropic’s API among them, use to signal that the service is overloaded. It is the server’s way of throwing its hands up and saying, “I have too many requests right now, and I cannot process yours.”

The 529 responses were the smoking gun of the Claude crash. The servers were overloaded on a scale the company hadn’t provisioned for, with a flood of simultaneous users hammering the endpoints. The system’s built-in defense mechanisms returned 529 codes to shed load rather than let the overload cascade into deeper failures. But what caused this unprecedented wave of traffic in the first place?
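For developers calling an API that returns these codes, the usual client-side response is to retry with exponential backoff and jitter instead of hammering an already overloaded server. The following is a minimal sketch of that idea, not Anthropic’s recommended client logic: `send` is a hypothetical callable standing in for a real HTTP request, and treating 500 as retryable alongside 529 is an assumption.

```python
import random
import time
from typing import Callable, Iterator, Tuple

# Assumption: both codes are treated as transient and worth retrying.
RETRYABLE = {500, 529}


def backoff_delays(retries: int, base: float = 1.0, cap: float = 30.0) -> Iterator[float]:
    """Yield capped exponential delays: base, 2*base, 4*base, ... up to cap."""
    for n in range(retries):
        yield min(cap, base * (2 ** n))


def call_with_retry(
    send: Callable[[], Tuple[int, str]],
    attempts: int = 5,
    sleep: Callable[[float], None] = time.sleep,
    jitter: Callable[[float], float] = lambda d: random.uniform(0, d),
) -> Tuple[int, str]:
    """Call send() until it returns a non-retryable status or attempts run out.

    send is a hypothetical stand-in for an HTTP request; it returns
    (status_code, body). The last response is returned either way.
    """
    status, body = send()
    for delay in backoff_delays(attempts - 1):
        if status not in RETRYABLE:
            break
        sleep(jitter(delay))  # randomized wait so clients do not retry in lockstep
        status, body = send()
    return status, body


# Simulated server: overloaded, then failing, then healthy.
responses = iter([(529, "overloaded"), (500, "internal error"), (200, "ok")])
print(call_with_retry(lambda: next(responses), sleep=lambda seconds: None))
```

The jitter matters during an event like this one: if every client waits exactly the same interval, the retries arrive as synchronized waves and prolong the overload.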

The Unexpected Catalyst: A Political Storm in the US

To understand why Anthropic’s network buckled, you have to look away from the server racks and toward the geopolitical landscape. The weekend prior to the crash was arguably the most turbulent in the company’s short history, setting off a chain reaction that resulted in a massive surge of new users.

The Pentagon Ban and Anthropic’s Ethical Stand

Late last week, the US administration under Donald Trump announced a federal blacklist against Anthropic, officially labeling the company a “supply chain risk.” The reason? The Department of War (Pentagon) demanded the removal of all safety guardrails from Claude for military use, specifically for battlefield simulations, surveillance, and autonomous targeting [2].

Anthropic, a company founded explicitly on the principles of Constitutional AI and stringent safety ethics, flatly refused. They stated they would not allow their technology to be used for autonomous lethal strikes without human oversight [3]. Following the ban, competitor OpenAI quickly swooped in and signed a deal with the military, creating a massive ideological divide in the tech community.

Dethroning ChatGPT on the App Store

This ethical standoff inadvertently became the greatest marketing campaign Anthropic could have ever hoped for. News of the company standing up to the US military went viral. Privacy advocates, open-source enthusiasts, and everyday users flocked to the platform to support the “rebel” AI.

Over the weekend, this politically fueled momentum pushed the Claude app to the #1 spot on the Apple App Store’s free charts, dethroning ChatGPT and leaving Google’s Gemini behind [3]. Anthropic saw a 60% increase in free users in a matter of days. It was this surge, with crowds of curious new users downloading the app and testing prompts simultaneously, that triggered the HTTP 529 overload on Monday.

The Ripple Effect on Pakistani Tech Professionals

While the drama unfolded in San Francisco, the consequences were felt intimately in Pakistan. The local tech industry has rapidly integrated generative AI into its daily workflow, so when Claude goes down, the local impact is swift and costly.

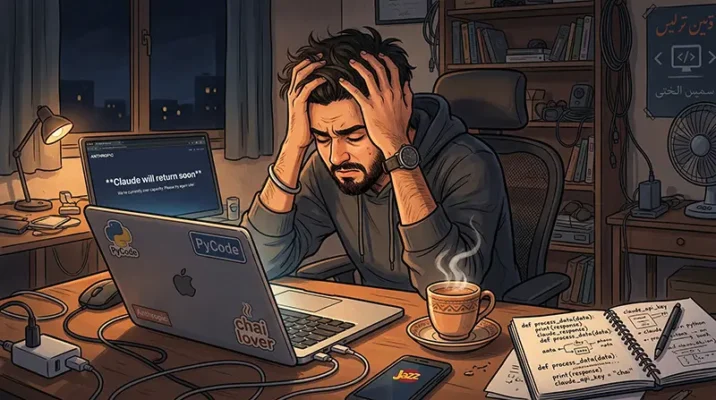

The Disruption of Claude Code

One of the biggest pain points during the outage was the complete failure of Claude Code, Anthropic’s highly praised AI-powered coding assistant. For developers in Pakistan using it right from their command line interface (CLI) to debug complex React applications or write Python scripts, the terminal suddenly started throwing upstream connect errors and connection termination warnings.

Because Claude Code logins were tied to the same authentication pathways that failed on the main website, developers were locked out of their workspaces. Many users on Reddit’s r/ClaudeAI community lamented that tasks which normally took three minutes with AI assistance were suddenly delayed by hours [4].

Freelancers and Software Houses Face Delays

Pakistan is home to a massive freelance economy on platforms like Upwork and Fiverr. From copywriting and SEO optimization to full-stack web development, AI is the engine driving high output.

During the disruption, many freelancers found themselves staring at the dreaded “Claude will return soon” message. Unlike larger corporations that might have enterprise redundancy, individual freelancers rely heavily on the context window and reasoning capabilities of Anthropic’s models (specifically Sonnet 4.6 and Opus 4.6). The sudden drop in productivity served as a harsh wake-up call about operational dependencies.

How Anthropic Handled the Crisis

When a digital product fails, the company’s response dictates public sentiment. Fortunately, Anthropic’s incident management was relatively swift, transparent, and communicative.

Transparent Status Updates and Mitigation

Instead of leaving users in the dark, the Anthropic team made heavy use of their official status page. At 11:49 UTC, they acknowledged the elevated errors. By 12:06 UTC, they reported that they were investigating failures in some API methods.

They clearly communicated that while the core Claude API (api.anthropic.com) was actually stable, the bottleneck was entirely centered around claude.ai and the login/logout paths. By correctly diagnosing that this was an authentication traffic jam rather than a core model failure, the engineering team was able to start mitigating the traffic flow. By 15:50 UTC, after a few rolling fixes and monitoring periods, Anthropic successfully restored full service, releasing a statement thanking users for their patience during the “unprecedented demand” [1].
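For readers following along from Pakistan, the status-page timestamps above can be converted to local time and the overall duration computed with a few lines of Python. This is a small sketch using only the three times quoted in this section; the dictionary labels are illustrative names, and PKT is modeled as a fixed UTC+5 offset because Pakistan does not currently observe daylight saving time.

```python
from datetime import datetime, timedelta, timezone

# Pakistan Standard Time as a fixed offset (UTC+5, no daylight saving).
PKT = timezone(timedelta(hours=5), "PKT")

# Timestamps as quoted from the status page; the labels are illustrative.
timeline_utc = {
    "first status update": datetime(2026, 3, 2, 11, 49, tzinfo=timezone.utc),
    "API failures under investigation": datetime(2026, 3, 2, 12, 6, tzinfo=timezone.utc),
    "full service restored": datetime(2026, 3, 2, 15, 50, tzinfo=timezone.utc),
}

for label, moment in timeline_utc.items():
    local = moment.astimezone(PKT)
    print(f"{label}: {moment:%H:%M} UTC = {local:%H:%M} PKT")

# Elapsed time between the first acknowledgement and full restoration.
duration = timeline_utc["full service restored"] - timeline_utc["first status update"]
print(f"duration: {duration}")
```

By this arithmetic the incident ran from 16:49 to 20:50 PKT, a little over four hours from first acknowledgement to full restoration.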

The Reality Check: Our Over-Reliance on AI

If there is one major takeaway from the Claude Crash, it is a glaring reminder of the tech ecosystem’s fragility. We have rapidly transitioned into an era where AI is not just a luxury; it is critical infrastructure.

When a single point of failure at a company in San Francisco can stall coding sprints in Karachi, delay content publishing in London, and trigger panic across Reddit, we must ask ourselves: Are we too reliant on a handful of AI providers? Much like the 2024 CrowdStrike outage, the Anthropic outage shows that speed and efficiency come at the cost of vulnerability. Building a robust workflow now means having redundancies: knowing how to switch between Claude, ChatGPT, or open-source local models like Llama 3 when the cloud inevitably rains errors.
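In code, that kind of redundancy often takes the form of a fallback chain: try the preferred provider first, then move down an ordered list when it fails. The sketch below is an illustration under stated assumptions, not a production client. `ProviderError`, `ask_with_fallback`, and the two stand-in provider functions are all hypothetical names; in practice each provider function would wrap a real SDK or a local model call.

```python
from typing import Callable, List, Sequence, Tuple


class ProviderError(Exception):
    """Hypothetical error a provider wrapper raises when it cannot answer."""


# A provider is a (name, ask) pair, where ask(prompt) returns the reply text.
Provider = Tuple[str, Callable[[str], str]]


def ask_with_fallback(prompt: str, providers: Sequence[Provider]) -> Tuple[str, str]:
    """Try each provider in order; return (provider_name, reply) from the first success."""
    errors: List[str] = []
    for name, ask in providers:
        try:
            return name, ask(prompt)
        except ProviderError as exc:
            errors.append(f"{name}: {exc}")  # remember why this one failed
    raise ProviderError("all providers failed: " + "; ".join(errors))


# Stand-ins for real clients: the primary is overloaded, the backup is a local model.
def overloaded_primary(prompt: str) -> str:
    raise ProviderError("HTTP 529")


def local_backup(prompt: str) -> str:
    return f"[local] {prompt}"


print(ask_with_fallback("hello", [("primary", overloaded_primary), ("backup", local_backup)]))
```

Keeping the chain as plain data makes it easy to reorder providers, or to put a local model last as a fallback that does not depend on anyone else’s servers.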

Quick Takeaways: What We Learned from the Claude Outage

- Massive Service Disruption: On March 2, 2026, Anthropic suffered a major outage affecting claude.ai, developer consoles, and Claude Code.

- The Culprit Errors: Users primarily faced HTTP 500 (Internal Server Error) and HTTP 529 (System Overload) messages.

- A Political Trigger: The outage was fueled by unprecedented traffic after Anthropic refused a US military mandate to remove AI safety guardrails, sparking a massive public surge of support.

- App Store Dominance: Driven by the controversy, Claude overtook ChatGPT as the #1 free app in the US, and the influx of new users overwhelmed the servers.

- Authentication Bottleneck: The core AI models remained intact; the crash primarily occurred at the login/logout and authentication pathways.

- The Redundancy Lesson: The global AI productivity drop highlights the urgent need for developers and businesses to have backup AI systems in place.

Conclusion: Moving Forward in an AI-Dependent World

The Claude outage of March 2026 will likely be remembered as a pivotal moment in AI history. It was the day a principled stand against military use inadvertently broke the internet’s favorite new tool. Anthropic showed the ethical backbone the industry needs, but it also learned a hard lesson about the architectural demands of sudden, explosive scale.

For Pakistan’s tech community and general users alike, this event is a potent reminder to diversify our digital toolkits. While Claude is a powerful ally in our daily workflows, true resilience comes from not putting all our prompts in one basket. As Anthropic scales its servers to meet this new, elevated demand, we too must scale our adaptability.

Are you relying too heavily on a single AI provider? Now is the time to build your backup plan.

References

[1] Mashable: “Claude is down: What we know about the Anthropic outage.” (March 2, 2026).

[2] Benzinga: “Claude AI Crashes Repeatedly As Pentagon Ban Sparks Massive User Surge.” (March 2, 2026).

[3] The Straits Times: “Anthropic’s Claude chatbot goes down for thousands of users amid Pentagon feud.” (March 2, 2026).

[4] Reddit (r/ClaudeAI): “Major outage – claude.ai, claude code, API all down for me.” (March 2, 2026).

[5] CNET: “Is Claude Down? Anthropic Says It’s Resolved the AI Tool’s Outage.” (March 2, 2026).

Frequently Asked Questions (FAQs)

Why did Claude go down?

The outage was caused by an unprecedented surge in user traffic. After Anthropic’s high-profile refusal to remove safety guardrails for the US military, a wave of new users flocked to the platform, overloading Anthropic’s servers and temporarily crippling the login pathways.

What do the HTTP 500 and 529 errors mean?

An HTTP 500 error indicates an internal server failure on Anthropic’s backend, meaning a process failed while handling your request. An HTTP 529 error means the system is overloaded with traffic and cannot accept new requests until the load eases.

How did the outage affect developers in Pakistan?

While the core API remained technically operational, failures in the authentication servers behind claude.ai and Claude Code logins prevented developers from accessing their accounts, disrupting workflows for freelancers and software houses across Pakistan.

How long did the outage last?

The disruption began around 11:49 UTC and lasted roughly four hours; Anthropic applied rolling fixes and fully resolved the “elevated errors” by 15:50 UTC.

Why did Claude suddenly become so popular?

The surge in popularity was driven by public support for Anthropic’s ethical stance against US military demands. This virality pushed the app to the #1 spot on the Apple App Store’s free charts, ahead of ChatGPT.

We Want to Hear from You!

Did you get caught in the great Claude crash yesterday? How much did the outage disrupt your daily workflow, and did you have to switch to ChatGPT or another tool to get things done?

Drop your experience in the comments below! If you found this breakdown helpful, please share it with your tech circles, WhatsApp groups, and fellow developers who might still be wondering why their terminal went crazy yesterday.