Google Gemini Dominates the New AI Benchmark: Pokémon Blue (2026)

Forget standardized testing. Forget MMLU scores. Forget coding challenges. In 2026, the only metric that truly separates the leaders from the laggards is: Can your AI beat the Elite Four?

For the past few months, a strange and delightful war has been raging on Twitch. The world’s top AI labs—Google, OpenAI, and Anthropic—have been pitting their “Agentic” models against a 30-year-old Game Boy game: Pokémon Blue.

What started as a fun experiment has turned into a serious evaluation of Artificial General Intelligence (AGI). And right now, the leaderboard tells a fascinating story about the different “personalities” of these AIs, with Google Gemini emerging as the undisputed speedrunner.

The New AI Benchmark: Why Pokémon?

Why are companies as large as Google and AI labs like Anthropic analyzing gameplay of a 1996 RPG? Because Pokémon is arguably the ultimate stress test for Agentic AI.

To beat Pokémon, an AI cannot simply predict the next word in a sentence. It must exhibit complex cognitive behaviors that mirror real-world work tasks:

- Long-Term Planning: “I need to beat Misty, so I need to catch a Pikachu now to use Thundershock later.” This mimics project management.

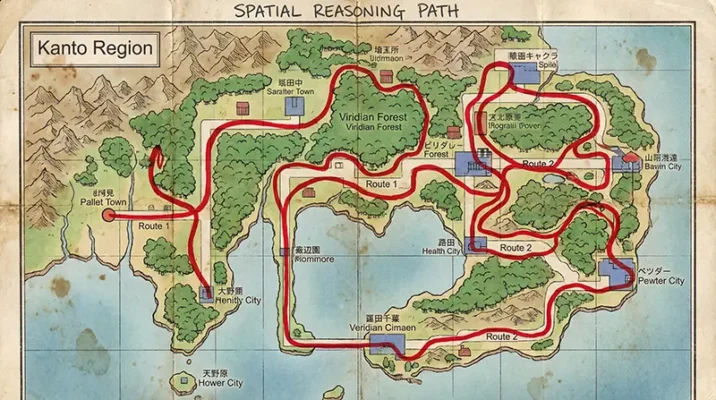

- Spatial Navigation: Understanding 2D maps and remembering that the PokéCenter is “left and up” requires spatial awareness akin to navigating a complex operating system or database.

- Resource Management: Saving money for Potions and managing PP (Power Points) for moves is essentially budgeting and resource allocation.

If an AI can navigate the Silph Co. maze without a walkthrough, it is showing the kind of reasoning needed to navigate your Excel spreadsheets, debug complex codebases, or manage supply-chain logistics.

Google Gemini: The Speedrunner

As of today, Google Gemini is the King of Kanto. Specifically, the new Gemini 3 Pro model has not just beaten Pokémon Blue; it has crushed it.

Performance Analysis

Google Gemini navigated the treacherous caves of Mt. Moon without getting lost, managed its inventory perfectly, and even utilized advanced strategies like “stat-maxing.” It didn’t just play; it optimized.

- Status: Completed Blue & Crystal.

- Strengths: Flawless navigation, perfect resource management, high execution speed.

- Next Level: It has already moved on to the sequel, Pokémon Crystal, showing it can handle day/night cycles and more complex map data.

The “Mech Suit” Advantage

Critics argue Google Gemini is “cheating” slightly. It relies on a heavy “harness,” a suite of custom tools (like a pathfinding algorithm and a memory log) that acts like a “mech suit.” These tools help Google Gemini interface with the game, and they suggest Google is betting on a future where AI is powerful because it is integrated with external tools, not just because of its raw brainpower.
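To make the “pathfinder” idea concrete, here is a minimal sketch of the kind of helper a harness might expose to the model. It is an illustration only, not the code used on any of the streams: the tile encoding (`#` for walls, `.` for walkable tiles), the `find_path` name, and the button labels are all assumptions.

```python
from collections import deque

# Hypothetical tile map: '#' is a wall, '.' is walkable. Not taken from the game.
GRID = [
    "#####",
    "#..##",
    "#.#.#",
    "#...#",
    "#####",
]

# Button name -> (row delta, column delta). The labels are assumptions.
MOVES = {"UP": (-1, 0), "DOWN": (1, 0), "LEFT": (0, -1), "RIGHT": (0, 1)}


def find_path(grid, start, goal):
    """Breadth-first search: return the shortest list of button presses, or None."""
    queue = deque([(start, [])])
    seen = {start}
    while queue:
        (row, col), path = queue.popleft()
        if (row, col) == goal:
            return path
        for button, (dr, dc) in MOVES.items():
            nxt = (row + dr, col + dc)
            in_bounds = 0 <= nxt[0] < len(grid) and 0 <= nxt[1] < len(grid[0])
            if in_bounds and grid[nxt[0]][nxt[1]] != "#" and nxt not in seen:
                seen.add(nxt)
                queue.append((nxt, path + [button]))
    return None  # goal is walled off or unreachable


print(find_path(GRID, (1, 1), (2, 3)))
# ['DOWN', 'DOWN', 'RIGHT', 'RIGHT', 'UP']
```

With a helper like this, the model only has to decide where it wants to go; the harness works out which buttons to press. A minimal harness leaves that step to the model's own reading of the screen.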

OpenAI GPT: The Brute Force Competitor

Right on the heels of Google Gemini are OpenAI’s models (likely the o3 or GPT-5 series).

Performance Analysis

OpenAI has also rolled credits on the Gen 1 games and is currently pushing through the Johto region. However, the style is markedly different.

- Status: Completed Blue.

- Style: Aggressive and combat-focused.

- Strategy: Viewers note that GPT often over-levels its starter Pokémon (usually Charizard) to brute-force its way through Gym Leaders.

Where Google Gemini might use a super-effective type match, GPT often chooses to simply overpower the enemy with raw damage. This reflects OpenAI’s broader philosophy: massive compute and raw power can solve almost any problem.
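The difference between the two styles can be shown with a deliberately simplified sketch. It ignores levels, stats, and the rest of the game's damage formula, and compares moves only by base power times a type multiplier. The function names and the three-entry type chart are assumptions made for illustration, and nothing here reflects how either model actually chooses moves.

```python
# Tiny excerpt of a type chart: (move type, defender type) -> multiplier.
# Pairs that are not listed are treated as neutral (1.0).
TYPE_CHART = {
    ("WATER", "ROCK"): 2.0,
    ("FIRE", "ROCK"): 0.5,
    ("NORMAL", "ROCK"): 0.5,
}

# Illustrative move list: (name, type, base power).
MOVES = [
    ("Water Gun", "WATER", 40),
    ("Ember", "FIRE", 40),
    ("Slash", "NORMAL", 70),
]


def effective_power(move, defender_type):
    """Base power scaled by the type multiplier (a simplification)."""
    _name, move_type, power = move
    return power * TYPE_CHART.get((move_type, defender_type), 1.0)


def pick_by_matchup(moves, defender_type):
    """Type-aware choice: maximize power after the type multiplier."""
    return max(moves, key=lambda m: effective_power(m, defender_type))[0]


def pick_by_raw_power(moves):
    """Brute-force choice: ignore types and take the biggest base power."""
    return max(moves, key=lambda m: m[2])[0]


print(pick_by_matchup(MOVES, "ROCK"))  # Water Gun (40 * 2.0 = 80)
print(pick_by_raw_power(MOVES))        # Slash (70 base, but 35 against Rock)
```

In this toy setup the brute-force pick does less than half the damage of the matchup pick, which is why a brute-force player needs the extra levels to compensate.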

Claude AI: The Philosophical Slowpoke

And then there is Claude AI. Anthropic’s Claude Opus 4.5 (like the older Claude 3.7 Sonnet before it) is taking the “scenic route.”

Performance Analysis

Claude has not beaten the game yet. It is currently wandering around Kanto, slowly earning badges.

- Status: Stuck in Kanto.

- Why so slow? Claude is playing on “Hard Mode.”

Reports suggest its harness is much more minimal than the one used by Google Gemini. It doesn’t have as many helper tools, meaning it has to rely on raw vision and reasoning to figure out that this pixel is a door and that pixel is a tree.

The “Existential Crisis”

Viewers on the “Claude Plays Pokémon” stream often catch the AI getting stuck in loops. It effectively has an existential crisis in Pallet Town because it forgot where it was going. While this makes for slower gameplay, it provides valuable data on “pure” reasoning capabilities without the crutch of external tools.

Agentic AI: Tools vs. Reasoning

This gaming war highlights the two divergent paths for the future of AI:

- The Google Gemini Approach (Tools): Give the AI a map, a compass, and a calculator. Let it use tools to be efficient. This represents the future of productivity—an AI that uses software to get the job done fast.

- The Anthropic Approach (Purity): Force the AI to learn how to read a map itself. This represents the future of research—creating a mind that truly understands the world, even if it takes longer to train.

For Pakistani businesses and developers looking to integrate AI agents in 2026, the choice comes down to the task. If you need a task done now (like data entry or customer support), the tool-assisted Google Gemini model is superior. If you are building experimental systems requiring deep, novel reasoning, Claude offers a fascinating, albeit slower, alternative.

The Verdict for 2026

Google Gemini wins the race, but Anthropic might win the war on purity.

Google Gemini proves that Agents with Tools are the future of immediate productivity. It handles the “boring” parts of execution (like pathfinding) so the model can focus on the goal. Claude AI proves that Raw Reasoning still has a long way to go before it matches a human 10-year-old’s intuition.

For now, if you want to be the very best, like no one ever was, you choose Google Gemini. If you want to enjoy the journey (and get stuck in a cave for 3 days), you choose Claude.

Key Takeaways for 2026

- Google Gemini is the best choice for speed and task completion.

- Pokémon Blue has become the standard for testing Agentic AI spatial awareness.

- Tool-use (the “Harness”) is the defining factor in AI performance this year.

Frequently Asked Questions (FAQs)

Which AI is currently the best at Pokémon Blue?

Google Gemini (specifically Gemini 3 Pro) is currently the leader because it has successfully completed the game and moved on to the sequel, Pokémon Crystal. It plays with the efficiency of a “speedrunner,” utilizing advanced strategies like stat-maxing and perfect inventory management to clear the game faster than OpenAI’s GPT or Claude.

Why do researchers use Pokémon Blue to test AI?

Researchers use Pokémon Blue because it serves as a perfect “sandbox” for Artificial General Intelligence (AGI). Unlike simple text prompts, the game requires long-term planning (e.g., saving money for items), spatial navigation (memorizing 2D maps), and resource management (managing move sets and health). If an AI can beat Pokémon without help, it shows the kind of reasoning skills needed for complex real-world tasks.

What is the difference between how Google Gemini and Claude AI play?

The main difference lies in their approach to tools. Google Gemini uses a heavy “harness” (custom tools and code) to act like a “Mech Suit,” allowing it to navigate and execute tasks rapidly. Claude AI, on the other hand, plays on “Hard Mode” with fewer tools, relying on raw visual processing and reasoning. This makes Claude much slower and prone to getting stuck, but arguably more “human-like” in its confusion.

Is Google Gemini cheating by using a harness?

Not necessarily. While critics argue that Gemini relies heavily on a “harness” (external code helpers), Google’s approach represents the future of Agentic AI in the workplace: an AI that controls powerful software tools to get jobs done efficiently. It prioritizes results and speed over “pure,” unaided reasoning.

What is Agentic AI?

Agentic AI refers to artificial intelligence models that don’t just chat but take autonomous actions to achieve a goal. In the context of this benchmark, the AI isn’t just describing a Pokémon battle; it is pressing the buttons, making decisions, and reacting to consequences in real time. This capability is critical for the future of automated software engineering and administrative work.
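The observe, decide, act cycle described here can be sketched in a few lines. This is a toy illustration under stated assumptions, not any lab's harness: `ToyGame` stands in for an emulator, and `choose_button` is a hard-coded placeholder where a real agent would call a model.

```python
from dataclasses import dataclass


@dataclass
class ToyGame:
    """Stand-in for an emulator: a one-dimensional corridor with a goal tile."""
    position: int = 0
    goal: int = 3

    def observe(self):
        return {"position": self.position, "goal": self.goal}

    def press(self, button):
        if button == "RIGHT":
            self.position += 1
        elif button == "LEFT":
            self.position -= 1


def choose_button(observation):
    """Placeholder for the model call; a real agent would query an LLM here."""
    return "RIGHT" if observation["position"] < observation["goal"] else "LEFT"


def run_agent(game, max_steps=10):
    """Observe, decide, act; repeat until the goal is reached or steps run out."""
    history = []
    for _ in range(max_steps):
        obs = game.observe()
        if obs["position"] == obs["goal"]:
            break
        button = choose_button(obs)
        game.press(button)
        history.append(button)
    return history


print(run_agent(ToyGame()))  # ['RIGHT', 'RIGHT', 'RIGHT']
```

The `max_steps` cap matters in practice: without some limit, an agent that has lost track of its goal can keep pressing buttons indefinitely.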