
Eightfold AI Lawsuit Alert: Is a “Secret Score” Killing Your Job Applications in 2026?

If you have applied for a job at a major tech company recently and faced instant rejection, the Eightfold AI lawsuit filed in January 2026 might explain why.

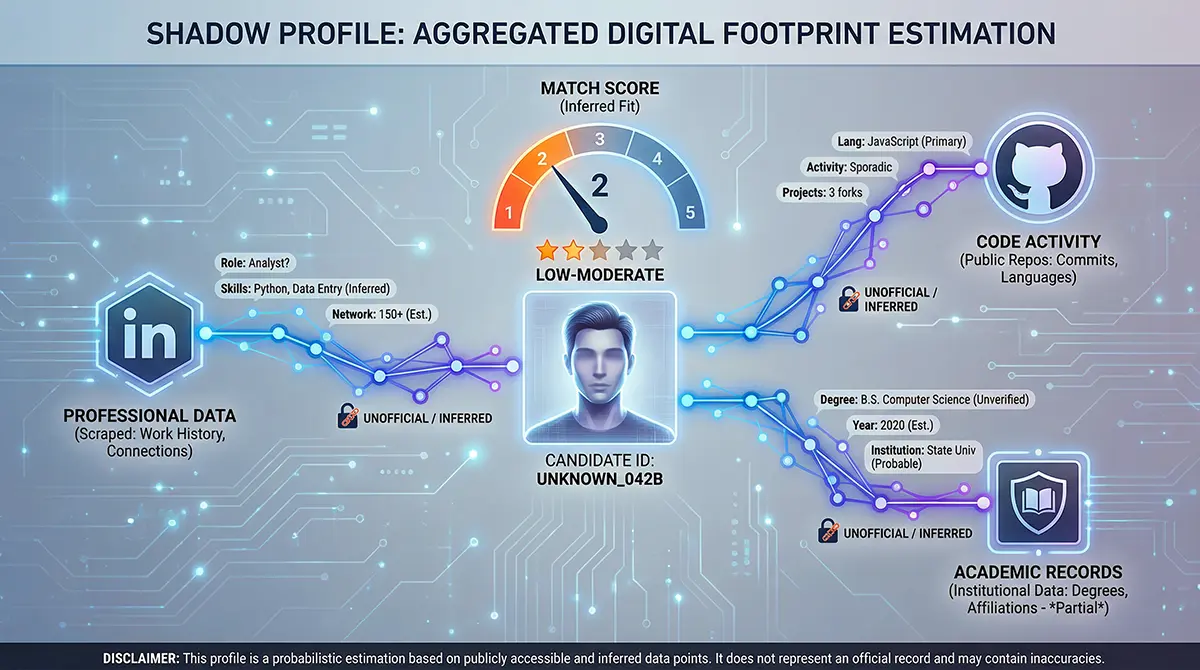

For years, job seekers have suspected that their resumes were being discarded by robots. Now, a class-action lawsuit alleges that those fears are justified: an artificial intelligence platform used by Fortune 500 giants may be creating “shadow profiles” of candidates, assigning them secret scores, and rejecting them without a human ever seeing their application.

This isn’t just about AI hiring systems being efficient; it is about them potentially breaking the law. The lawsuit alleges that Eightfold AI is operating as an illegal “Consumer Reporting Agency,” similar to a credit bureau, but without the regulations that protect your rights.

Whether you are a developer in Pakistan applying for remote work or a project manager in California, this legal battle exposes the hidden mechanisms of job application rejection in the modern age.

What is the Eightfold AI Lawsuit?

On January 21, 2026, two rejected job seekers, Erin Kistler and Sruti Bhaumik, filed a class-action complaint in the Superior Court of California against Eightfold AI.

The Eightfold AI lawsuit differs from typical discrimination cases. The plaintiffs are not suing the employers (like Microsoft or PayPal) directly; they are suing the AI provider itself. They allege that Eightfold AI scraped data from the web, including LinkedIn, GitHub, and social media, to build comprehensive dossiers on candidates without their consent.

Key Allegations:

- Illegal Data Scraping: Gathering personal data you never submitted to the employer.

- Shadow Profiles: Creating a hidden file on your “employability” that you cannot see or dispute.

- FCRA Violation: Acting as a consumer reporting agency without adhering to the Fair Credit Reporting Act (FCRA).

This lawsuit is a direct challenge to the “Black Box” of algorithmic hiring. If successful, it could force the entire AI hiring industry to become transparent.

The “Secret Score” Explained: How AI Hiring Systems Work

Most candidates believe that when they submit a job application, the system scans the PDF for keywords. The reality described in the complaint is far more dystopian.

The lawsuit alleges that Eightfold’s “Talent Intelligence Platform” assigns every applicant a Match Score, usually ranging from 0 to 5 stars. This score is reportedly based not just on your resume, but on a massive aggregation of external data points.

What Goes Into a Shadow Profile?

According to the complaint, the AI might judge your “likelihood of success” based on:

- Old Social Profiles: Data you deleted or omitted to avoid ageism or bias.

- Inferred Traits: The AI might guess if you are a “team player” or “ambitious” based on your writing style or online activity.

- Predicted Career Path: Algorithms deciding your future potential based on historical patterns of other people.

If your Match Score is low, your application is allegedly filtered out before a human recruiter even opens the file.
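To make the alleged mechanism concrete, here is a minimal sketch of threshold-based screening. It is purely illustrative: the complaint does not publish Eightfold's algorithm, so the `Applicant` fields, the 50/50 weighting, and the 3.0-star cutoff are all assumptions invented for this example.

```python
from dataclasses import dataclass


@dataclass
class Applicant:
    name: str
    resume_signal: float    # 0.0-1.0, from the resume the applicant submitted
    external_signal: float  # 0.0-1.0, from data the applicant never submitted


def match_score(a: Applicant, external_weight: float = 0.5) -> float:
    """Blend both signals into a 0-5 star score (hypothetical formula)."""
    blended = (1 - external_weight) * a.resume_signal + external_weight * a.external_signal
    return 5 * blended


def screen(applicants, threshold=3.0):
    """Keep only applicants whose score meets the (hypothetical) cutoff."""
    return [a for a in applicants if match_score(a) >= threshold]


applicants = [
    Applicant("A", resume_signal=0.9, external_signal=0.8),
    Applicant("B", resume_signal=0.9, external_signal=0.2),  # same resume, weaker external data
]
shortlisted = screen(applicants)
print([a.name for a in shortlisted])  # prints ['A']
```

In this toy setup, applicants A and B submit equally strong resumes, yet B is filtered out solely because of data B never provided. That is the kind of outcome the plaintiffs say candidates can neither see nor dispute.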

Is Your Resume Being Ignored? The Match Score Reality

For job seekers, especially those in competitive markets like Pakistan, the implications are massive. You might spend hours tailoring your resume and cover letter, only to be judged on data you didn’t even provide.

- The Problem: You cannot fix errors in a profile you don’t know exists.

- The Risk: If the AI hallucinates a detail—for example, assuming you lack a skill because it wasn’t on an old LinkedIn profile—you are rejected instantly.

This resume screening process creates a barrier where qualified candidates are rejected due to “algorithmic bias” or bad data, with no avenue for appeal.

The Legal Battle: FCRA Violations and Consumer Rights

The legal backbone of the Eightfold AI lawsuit is the Fair Credit Reporting Act (FCRA).

In the United States, if a company (like Equifax) keeps a file on your credit history that affects your ability to get a loan, you have the legal right to:

- See the file.

- Know when it is used against you.

- Dispute and correct inaccurate information.

The plaintiffs argue that Eightfold AI is effectively a “Credit Bureau for Labor.” Because the company sells what amount to “background checks” on employability, they contend, it should be subject to the FCRA.

Critical Insight: If the court agrees, Eightfold AI—and potentially other AI hiring systems—would legally have to show you your “score” and allow you to fix it.

Why This Matters for Job Seekers in Pakistan and Globally

While the lawsuit was filed in California, the impact is global. Many Pakistani professionals apply for remote roles at international tech giants.

Search data from Pakistan indicates high interest in “AI,” “Microsoft,” and “Job Applications.” Here is why this lawsuit matters to you:

- Global Platforms: The same Eightfold AI algorithms used in the US are likely used to screen global talent for remote positions.

- Data Privacy: If data scraping is ruled illegal in this context, it could change how international companies process applications from Pakistan.

- Algorithmic Bias: AI models trained on Western data often penalize international degrees or non-Western career gaps. A victory here could reduce that bias.

The Companies Involved: Microsoft, PayPal, and More

The lawsuit highlights that Eightfold AI is a “unicorn” platform used by a significant portion of the Fortune 500. Specific companies mentioned in the context of the plaintiffs’ rejections include:

- Microsoft

- PayPal

- Salesforce

- Bayer

If you applied to these companies recently and received an instant, generic rejection email, your application may have been processed by the very Match Score system currently under fire.

Protect Yourself: Steps to Take Today

While we wait for the verdict, here is how you can navigate the current landscape of AI hiring:

- Audit Your Digital Footprint: Since data scraping is a key component, ensure your LinkedIn, GitHub, and portfolio sites are up-to-date and consistent with your resume.

- Optimize for ATS: Continue to use standard keywords in your resume to ensure high relevance, even if a shadow profile exists.

- Monitor the Case: If the plaintiffs win, class members may be given a way to claim damages or view their files.

- Opt Out of Automated Profiling: Some candidates are now adding disclaimers to their cover letters explicitly opting out of “automated profiling,” though the legal weight of this is still untested.
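For the footprint audit in the first step, a small script can flag mismatches between your resume and a public profile. This is a rough sketch under simple assumptions: you type in both skill lists by hand, and the `normalize` and `audit` helpers are hypothetical names written for this example, not part of any real tool.

```python
def normalize(skills):
    """Lower-case and trim skills so 'SQL ' and 'sql' compare as equal."""
    return {s.strip().lower() for s in skills}


def audit(resume_skills, profile_skills):
    """Report skills that appear in one place but not the other."""
    resume = normalize(resume_skills)
    profile = normalize(profile_skills)
    return {
        "missing_from_profile": sorted(resume - profile),
        "missing_from_resume": sorted(profile - resume),
    }


report = audit(
    resume_skills=["Python", "Kubernetes", "SQL"],
    profile_skills=["python", "SQL ", "PHP"],
)
print(report)
# prints {'missing_from_profile': ['kubernetes'], 'missing_from_resume': ['php']}
```

Anything listed under `missing_from_profile` is a skill an automated system reading only your public profile would not see, so those are the entries worth updating first.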

Conclusion

The Eightfold AI lawsuit is more than just a legal dispute; it is a battle for the soul of the recruitment industry. It challenges the notion that a machine can secretly judge a human’s worthiness for a career.

If the court rules that these “Talent Intelligence Platforms” are subject to the FCRA, the era of secret “Black Box” hiring may finally come to an end. For the millions of job seekers rejected by an algorithm they couldn’t see, that transparency cannot come soon enough.

Resources

- Reuters: AI company Eightfold sued for helping companies secretly score job seekers.

- HR-Brew: AI-powered hiring platform Eightfold AI faces lawsuit over hiring data used to rate candidates.

Frequently Asked Questions (FAQ)

What is the Eightfold AI lawsuit about?

The class-action lawsuit, filed in January 2026 (Kistler v. Eightfold AI Inc.), alleges that Eightfold AI creates illegal “shadow profiles” of job applicants. Plaintiffs claim the company scrapes personal data from the web to assign “Match Scores” to candidates without their consent, violating the Fair Credit Reporting Act (FCRA).

Which companies use Eightfold AI?

Eightfold AI is used by many Fortune 500 companies to screen talent. The lawsuit specifically mentions rejection experiences related to applications at Microsoft, PayPal, Salesforce, and Bayer.

What is a “Match Score”?

A “Match Score” is a rating (typically 0 to 5 stars) assigned to a job seeker by Eightfold’s AI. Unlike a standard resume review, this score is allegedly calculated using external data scraped from sites like LinkedIn and GitHub to predict a candidate’s “likelihood of success” before a human recruiter ever sees the application.

Can I see my shadow profile?

Not currently. The core argument of the lawsuit is that Eightfold AI operates as a “Consumer Reporting Agency” (like a credit bureau) but fails to provide the transparency required by law, so job seekers have no way to view or correct their data.

Is it illegal to use AI to screen job applicants?

Using AI for screening is not illegal in itself. However, the lawsuit argues that if an AI company sells data-driven reports on individuals (background checks) to employers, it must comply with the FCRA. This requires the company to notify the candidate and allow them to dispute inaccurate information, which the plaintiffs claim Eightfold failed to do.